BigML’s upcoming release on Wednesday, December 15, 2021, will bring a new set of Image Processing resources to the BigML platform. In this post, we show you how to build a simple image classifier on the BigML Dashboard. Let’s start!

Image classification is a supervised learning technique for images. Image classification models are trained to identify various classes of images and have a tremendous number of applications, as touched on in our prior posts. This is why BigML is introducing image data support with its latest Image Processing release. Here, we build a simple image classification application from scratch to show how image classifiers can be created on the BigML Dashboard with ease, speed, and accuracy.

When I walk around my neighborhood, I see a lot of beautiful flowers; many of my neighbors enjoy gardening. Lilies are especially popular. With their large, colorful blooms, lilies stand out in any front yard. But recently I was told that some of the “lilies” I saw were actually daylilies. I’m not a flower person, let alone a botanist, so telling them apart is beyond my expertise.

I decided to build an image classifier using BigML to help identify whether a flower is a lily or a daylily. This way, we don’t need to understand difficult technical terms such as petals vs. sepals. Plus, this author is a firm believer that “a picture is worth a thousand words!”

Preparing the Data

I searched the Internet and downloaded pictures of lilies and daylilies, 108 of each, for 216 images in total.

First, you need to label the pictures, because image classification is a supervised technique and needs labeled examples to build models. BigML provides many flexible ways to label your images. The most straightforward one is to organize the images into folders, using the folder names as the labels (classes).

As seen above, you can put the pictures into two folders: all daylily pictures go in the “daylily” folder, and all lily pictures go in the “lily” folder. With this structure, the folder names become the labels of the images they contain.
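To double-check the folder-based labeling before uploading, a small shell sketch can list each file with the label its folder name implies and count the images per class. The helpers `list_labels` and `count_labels` are hypothetical and not part of BigML; BigML derives the labels itself when the zip file is uploaded.

```shell
#!/bin/sh
# Hypothetical helper: print "label,filename" for every file in the given
# class folders, taking the label from the folder name (the convention
# described above for BigML uploads).
list_labels() {  # $@ = class folders, e.g. lily daylily
  for dir in "$@"; do
    label=$(basename "$dir")
    for f in "$dir"/*; do
      [ -f "$f" ] || continue   # skips the unexpanded glob of an empty folder
      printf '%s,%s\n' "$label" "$(basename "$f")"
    done
  done
}

# Hypothetical helper: count images per label to check class balance.
count_labels() {
  list_labels "$@" | cut -d, -f1 | sort | uniq -c
}
```

For the data in this post, `count_labels lily daylily` should report 108 images for each class.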

Now, select both folders and compress them into a zip file. Alternatively, on the command line, run a command such as:

zip -r lily-or-daylily.zip lily daylily

Uploading the Data

You can drag and drop the zip file onto the BigML Dashboard to upload it. Alternatively, if your data is hosted in the cloud, you can perform a remote upload using its URL.

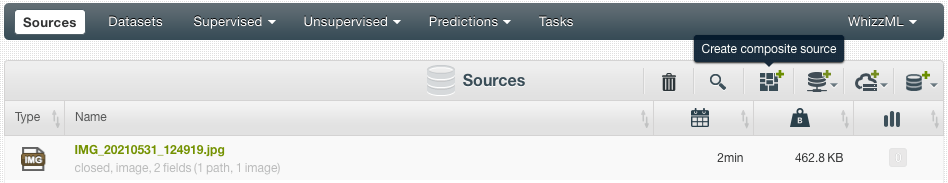
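The same upload can also be scripted against the BigML REST API. The sketch below is an outline under assumptions, not an official recipe: it assumes your credentials are in the `BIGML_USERNAME` and `BIGML_API_KEY` environment variables and that `https://bigml.io/andromeda` is the API endpoint, and the helper names are hypothetical. Check the BigML API documentation before relying on it.

```shell
#!/bin/sh
# Assumptions: BigML REST API endpoint (override with BIGML_URL) and
# credentials taken from the BIGML_USERNAME / BIGML_API_KEY variables.
BIGML_URL="${BIGML_URL:-https://bigml.io/andromeda}"
BIGML_AUTH="username=${BIGML_USERNAME:-};api_key=${BIGML_API_KEY:-}"

# Hypothetical helper: upload a local file (the zip of labeled folders)
# as a new source via a multipart form upload.
upload_zip() {  # $1 = path to the zip file
  curl -s "${BIGML_URL}/source?${BIGML_AUTH}" -F "file=@$1"
}

# Hypothetical helper: JSON body for creating a source from a URL.
remote_body() {  # $1 = public URL of the zip file
  printf '{"remote": "%s"}\n' "$1"
}

# Hypothetical helper: remote upload, where BigML fetches the file itself.
upload_remote() {  # $1 = public URL of the zip file
  curl -s "${BIGML_URL}/source?${BIGML_AUTH}" \
    -X POST -H 'content-type: application/json' -d "$(remote_body "$1")"
}
```

For example, `upload_zip lily-or-daylily.zip` should return JSON describing the new source, including its resource ID.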

Once the zip file is uploaded, an image composite source is created:

An image composite source is a collection of image sources. Clicking the composite source in the source list view above opens it in three views, selectable from the three tabs on the left under the “FORMAT” heading. The default view is the “Fields” view, which displays the fields of the composite source:

As expected, one of the fields is “label”, whose values were taken from the folder names in the data.

The “Sources” view lists all the component sources of the composite, that is, all the image sources:

You can click an individual source to view its image and related details:

In the “Images” view, you can see all the images and their labels:

In this view, you can also select images and correct their labels.

When an image composite source is created, BigML analyzes the images and automatically generates a set of numeric features for each image. Those features appear as additional fields in the composite source. You can configure which sets of image features are generated: some capture low-level features such as edges and colors, while the pre-trained CNNs capture more sophisticated features. In addition to training a deepnet as an image classifier, we will use the features from one of the pre-trained CNNs to create a second image classifier.

Next, clone the image composite source, which creates a new composite source as a copy of the original:

Then, from the newly cloned source, go to “Configure source”. In the “Image analysis” panel, select “ResNet-18” from the “Pre-trained CNN” dropdown list and deselect “Histogram of gradients”, which is selected by default:

Rename the composite source to “lily-or-daylily resnet18”. After the composite is updated, you can see that it contains 512 “IMAGE FEATURES” fields:

Creating Datasets

The composite sources are now ready. Using the 1-click dataset option in the cloud action menu, create two datasets, one from “lily-or-daylily” and another from “lily-or-daylily resnet18”:

After a dataset is created, in its detailed view you can see the field summaries, some univariate statistics, and the corresponding field histograms.

The histogram of the “image_id” field shows handy mini previews of the images, which change when you reload the page. The “label” field histogram shows the distribution of the label classes at a glance. The red exclamation point indicates that the “filename” field was automatically set as non-preferred, which means it won’t be used when training a model. Above the field names, you can also see that “label” was automatically assigned as the objective field.

Image feature fields are hidden by default to reduce clutter, because there are typically at least several dozen of them. To see them, click the “Click to show image features” icon next to the “Search by name” box.

Before you create models, split each dataset into two so that you can use one to train models and the other to evaluate them. BigML provides a 1-click “Training|Test Split” option, which randomly sets aside 80% of the instances for training and 20% for testing.
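Outside the Dashboard, an 80/20 split can be made with two dataset requests that share a sample rate and seed, the second using out-of-bag sampling to select the complementary 20%. This is a sketch assuming the BigML REST API dataset parameters `origin_dataset`, `sample_rate`, `seed`, and `out_of_bag`, the `https://bigml.io/andromeda` endpoint, and credentials in `BIGML_USERNAME`/`BIGML_API_KEY`. The seed string and helper names are hypothetical, and the Dashboard’s 1-click option may use a different seed, so the exact instances in each split can differ.

```shell
#!/bin/sh
# Assumed endpoint and credentials (see the BigML API documentation).
BIGML_URL="${BIGML_URL:-https://bigml.io/andromeda}"
BIGML_AUTH="username=${BIGML_USERNAME:-};api_key=${BIGML_API_KEY:-}"

# Hypothetical helper: JSON body for one side of an 80/20 split.
# Same seed + "out_of_bag": true selects the instances the 80% sample left out.
split_body() {  # $1 = origin dataset ID, $2 = "train" or "test"
  if [ "$2" = "test" ]; then oob=true; else oob=false; fi
  printf '{"origin_dataset": "%s", "sample_rate": 0.8, "seed": "lily-or-daylily", "out_of_bag": %s}\n' "$1" "$oob"
}

# Hypothetical helper: POST a JSON body to the dataset endpoint.
create_dataset() {  # $1 = JSON request body
  curl -s "${BIGML_URL}/dataset?${BIGML_AUTH}" \
    -X POST -H 'content-type: application/json' -d "$1"
}
```

Calling `create_dataset "$(split_body dataset/<id> train)"` and then the same with `test` would create the two datasets, where `dataset/<id>` stands for the full dataset’s resource ID.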

Creating Models

Deepnet is the BigML resource for deep neural networks. When you create a deepnet from a dataset containing images, BigML trains a particular type of deep neural network, a Convolutional Neural Network (CNN), and ignores all extracted image feature fields.

From the training dataset “lily-or-daylily|Training [80%]”, use the 1-click deepnet option from the cloud action menu to create a deepnet using default parameter values.

While CNNs are excellent at image classification, they can take a long time to train, especially when the dataset has thousands of images or more. Image features generated by pre-trained CNNs capture sophisticated visual patterns and are therefore effective inputs for both supervised and unsupervised models. For image classification, you can use these features to train other supervised models, such as ensembles or logistic regressions, which usually take much less time to train.

Following this logic, the dataset “lily-or-daylily resnet18” has 512 image feature fields generated by the pre-trained CNN “ResNet-18” (after trying the five available pre-trained CNNs, I decided to use ResNet-18 for this application). From its training set “lily-or-daylily resnet18|Training [80%]”, create a 1-click logistic regression.
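Both models can also be requested through the REST API with default parameters by posting the ID of a training dataset. A minimal sketch, assuming the `https://bigml.io/andromeda` endpoint, credentials in `BIGML_USERNAME`/`BIGML_API_KEY`, and the `deepnet` and `logisticregression` resource names used in BigML resource IDs; the helper names are hypothetical.

```shell
#!/bin/sh
# Assumed endpoint and credentials (see the BigML API documentation).
BIGML_URL="${BIGML_URL:-https://bigml.io/andromeda}"
BIGML_AUTH="username=${BIGML_USERNAME:-};api_key=${BIGML_API_KEY:-}"

# Hypothetical helper: JSON body that trains a model with default parameters.
model_body() {  # $1 = training dataset ID, e.g. dataset/<id>
  printf '{"dataset": "%s"}\n' "$1"
}

# Hypothetical helper: create a model of the given resource type.
create_model() {  # $1 = "deepnet" or "logisticregression", $2 = dataset ID
  curl -s "${BIGML_URL}/$1?${BIGML_AUTH}" \
    -X POST -H 'content-type: application/json' -d "$(model_body "$2")"
}
```

Here, `create_model deepnet dataset/<id>` would use the ID of “lily-or-daylily|Training [80%]”, and `create_model logisticregression dataset/<id>` the ID of “lily-or-daylily resnet18|Training [80%]”.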

Evaluating the Models

After the deepnet “lily-or-daylily|Training [80%]” is created, you’re presented with the image deepnet page:

You can see lots of useful information about the deepnet, including its algorithm and parameters. The main focus of the page is the deepnet’s performance on a set of sampled instances, which were used for validation during training. You can go over the images that were classified correctly, as well as those classified incorrectly. However, this is not a true evaluation. To measure the deepnet’s true performance (how well it classifies images not seen in training), you need to create a BigML evaluation. To do so, go to the cloud action menu and click “EVALUATE”:

You will see the following:

The test dataset is the one we split from the original dataset; it has 44 instances, about 20% of the 216 images. The 80% training dataset we used to create the deepnet has the remaining 172 instances. Click the “Evaluate” button to create an evaluation:

The overall accuracy of the deepnet is 93.2%. We also did an evaluation of the logistic regression “lily-or-daylily resnet18|Training [80%]”:

The accuracy of the logistic regression is still very high at 90.9%, just a bit lower than that of the deepnet. But keep in mind that when the number of images reaches the thousands, deepnets can take hours to train, while logistic regressions take only minutes.
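Accuracy is the fraction of test instances classified correctly, so the reported percentages can be translated back into counts on the 44-image test set. The sketch below (hypothetical helpers) searches for the integer counts that round to a given percentage: 93.2% corresponds to 41 of 44 images and 90.9% to 40 of 44, so the logistic regression misclassified just one more test image than the deepnet.

```shell
#!/bin/sh
# Accuracy (%) = 100 * correct / total, printed with one decimal place.
accuracy_pct() {  # $1 = correct predictions, $2 = total test instances
  awk -v c="$1" -v n="$2" 'BEGIN { printf "%.1f\n", 100 * c / n }'
}

# Print every count of correct predictions (0..total) whose accuracy
# rounds to the reported percentage.
correct_count() {  # $1 = reported accuracy, e.g. 93.2; $2 = total, e.g. 44
  k=0
  while [ "$k" -le "$2" ]; do
    if [ "$(accuracy_pct "$k" "$2")" = "$1" ]; then
      echo "$k"
    fi
    k=$((k + 1))
  done
}
```

With one-decimal rounding and 44 test images, each reported percentage matches exactly one count, so `correct_count 93.2 44` prints `41` and `correct_count 90.9 44` prints `40`.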

Classifying New Images

I took 9 pictures of flowers around my neighborhood, and now I’m eager to classify them. Here, we only show the steps for the deepnet. The steps for the logistic regression are very similar; just remember to configure the new images to have “ResNet-18” image features.

Drag and drop the new pictures onto the BigML Dashboard, and they will be created as image sources.

From the deepnet page, click on the “PREDICT” option on the cloud action menu:

This brings up the prediction form of the deepnet we created, where you can select an uploaded image and classify it.

Use the “Select image” dropdown menu to pick the image you want to classify, and then click the “Predict” button.

You can see that the selected image is classified as a lily with a probability of 99.28%. Another image was classified as a daylily with a probability of 91.31%.

Of course, you can also use BATCH PREDICTION to classify multiple images. First, you need a dataset containing the images, so click “Create composite source” on the action bar in the source list view:

Select the uploaded images as the components. Here, these are the 9 pictures I took:

Then you can create a composite source, naming it “neighborhood-flowers”:

After creating a 1-click dataset from the composite source, go to our deepnet and select “BATCH PREDICTION” from the cloud action menu:

Pick “neighborhood-flowers” as the dataset, then configure the batch prediction output. You only need the “image_id” field, which links to each classified image, plus the predicted label and its probability.

Next, click on the “Predict” button to create the batch prediction:

You can download the batch prediction as a CSV file. By default, BigML also generates an output dataset containing the batch prediction results; clicking the “Output dataset” button takes you to its dataset view. As an aside, the dataset’s “Scatterplot” view is very good for inspecting the results, because it displays thumbnail images and their classified labels at the same time.

In the “Scatterplot” view, each instance is plotted as a dot on the chart. The two colors represent the two classes, and the probabilities fall between 0.90 and 1.00. If you mouse over a dot, its image, classified label, and probability are highlighted in the “DATA INSPECTOR” panel on the right.
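If you work with the downloaded batch prediction CSV instead, a short awk sketch can give a similar overview from the command line: the number of images per predicted class and the range of probabilities. It assumes a header row and guesses the column names `label` and `probability`; pass the real names from your file as the second and third arguments. `summarize_predictions` is a hypothetical helper, and it does not handle commas inside quoted values.

```shell
#!/bin/sh
# Hypothetical helper: summarize a batch prediction CSV.
# $1 = CSV file, $2 = predicted-class column (default "label", a guess),
# $3 = probability column (default "probability", a guess).
summarize_predictions() {
  awk -F, -v lab="${2:-label}" -v prob="${3:-probability}" '
    { gsub(/"/, ""); sub(/\r$/, "") }    # drop quotes and Windows line endings
    NR == 1 {                            # find both columns by header name
      for (i = 1; i <= NF; i++) {
        if ($i == lab) li = i
        if ($i == prob) pi = i
      }
      if (!li || !pi) { print "column not found" > "/dev/stderr"; bad = 1; exit 1 }
      next
    }
    {
      count[$li]++                       # predictions per class
      p = $pi + 0
      if (n == 0 || p < min) min = p     # lowest probability so far
      if (n == 0 || p > max) max = p     # highest probability so far
      n++
    }
    END {
      if (bad) exit 1
      for (c in count) printf "%s %d\n", c, count[c]
      printf "min %.4f\nmax %.4f\n", min, max
    }' "$1"
}
```

For example, `summarize_predictions batch-prediction.csv` (a hypothetical file name) prints one `class count` line per class, in no guaranteed order, followed by `min` and `max` probability lines.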

I’m happy to report that after completing these steps, I checked with the neighbors who planted those flowers, and they confirmed that all 9 pictures were classified correctly. Mission accomplished!

Summary

We set out to build an image classifier on the BigML Dashboard to help tell apart two similar flower species. We downloaded images from the Internet and used them to create two composite sources and their datasets. From one dataset, we created a deepnet, which is a Convolutional Neural Network. The other dataset has a set of pre-trained CNN image features, which we used to create a logistic regression. Both models classified lilies and daylilies with high accuracy, and the pictures I took around my neighborhood were all classified correctly. The whole process shows how easily, quickly, and accurately image classifiers can be built on the BigML Dashboard. This is remarkable: knowing nothing about flowers except how to download images of them, we were able to create a computer program that classifies them accurately, with the Machine Learning power of the BigML platform.

Do you want to know more about Image Processing?

Be sure to visit the release page of BigML Image Processing, where you can find more information and documentation. For your convenience, there are also links to other blog posts on related topics, such as composite sources and image labeling. Feel free to join the FREE live webinar on Wednesday, December 15 at 8:30 AM PST / 10:30 AM CST / 5:30 PM CET. Register today; space is limited!
