A while ago, when I was at university, my professor shared a nice story about a fruit import and export company that was concerned about its quality control system for watermelons, which was starting to falter: some watermelons that weren’t yet ready to be eaten were slipping past its controls. I immediately assumed the cause was data drift or overfitted models, and that they would need to retrain their Machine Learning models. However, my professor explained that their quality control system was, in fact, an 80-year-old man who had the amazing ability to tell whether a watermelon was ready to be eaten just by tapping it and listening to the sound it made. Although I found this way of working pretty peculiar, even this quality control system could benefit from data-driven insights that would reveal room for improvement.

Quality control remains an issue for many companies. Even subtle variations in assembly-line processes can cost companies huge amounts of money down the line. Fortunately, BigML can help with that. In this post, we will show how to use image feature extraction and unsupervised learning to find defects in images from a manufacturing process. Specifically, we will focus on casting, a manufacturing process in which a liquid material is usually poured into a mold containing a hollow cavity of the desired shape and then allowed to solidify.

Using Anomaly Detection to Find Casting Defects

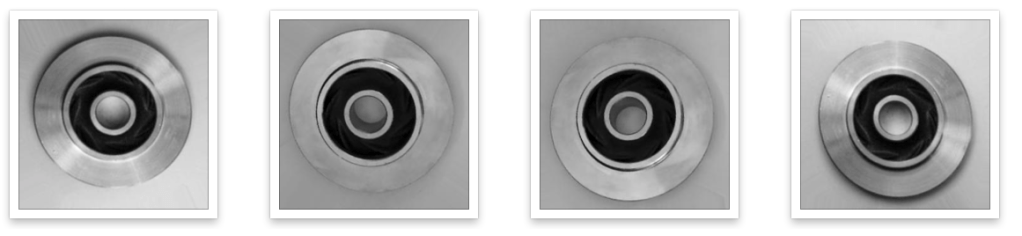

The dataset we’re going to use, originally from Kaggle user ravirajsinh45, contains images of pump impellers made by metal casting. For this example, we will use a reduced version of that dataset with just 112 images, which can be found here. A typical pump impeller looks like this:

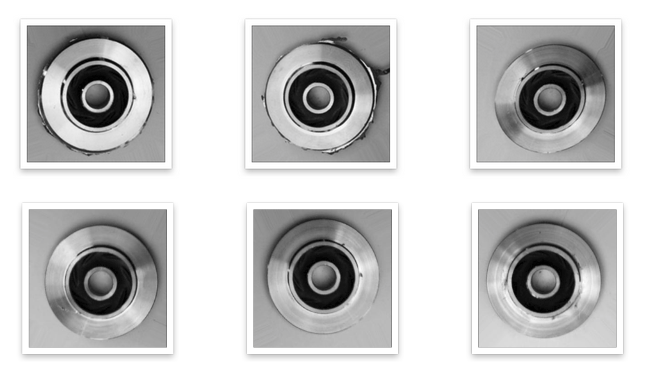

Of those 112 images, 106 show good parts, while the remaining 6 show impellers with defects that render them unusable. Defective impellers look like this:

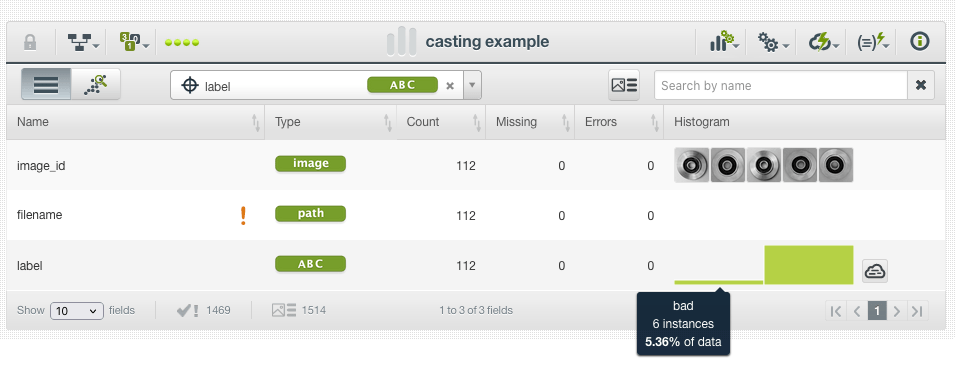

Please note that some of these defects are tiny and hard for the human eye to catch, but BigML can greatly help us with this task. We first upload the .zip file with the images. Since the good and bad images are already separated into folders, BigML automatically labels all instances in our composite source. If you need a refresher, feel free to read our Image Labeling blog post for more details.
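BigML infers each image’s label from the folder that contains it. The minimal sketch below, using only Python’s standard library, mimics that folder-to-label convention locally, which can be handy for checking the class balance of an archive before uploading it. It is not BigML’s code: the helper `labels_from_zip`, the folder names `good` and `bad`, and the file names are all hypothetical.

```python
import io
import zipfile
from collections import Counter
from pathlib import PurePosixPath

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}

def labels_from_zip(zip_bytes):
    """Hypothetical helper: map each image in a zip archive to its parent folder name."""
    labels = {}
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            path = PurePosixPath(name)  # zip member names always use "/"
            if name.endswith("/") or path.suffix.lower() not in IMAGE_SUFFIXES:
                continue  # skip directory entries and non-image files
            labels[name] = path.parent.name
    return labels

# Build a tiny in-memory archive with a hypothetical good/bad folder layout.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("impellers/good/part_001.jpeg", b"placeholder bytes")
    archive.writestr("impellers/good/part_002.jpeg", b"placeholder bytes")
    archive.writestr("impellers/bad/part_101.jpeg", b"placeholder bytes")
    archive.writestr("impellers/README.txt", b"not an image")

labels = labels_from_zip(buffer.getvalue())
print(Counter(labels.values()))
```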

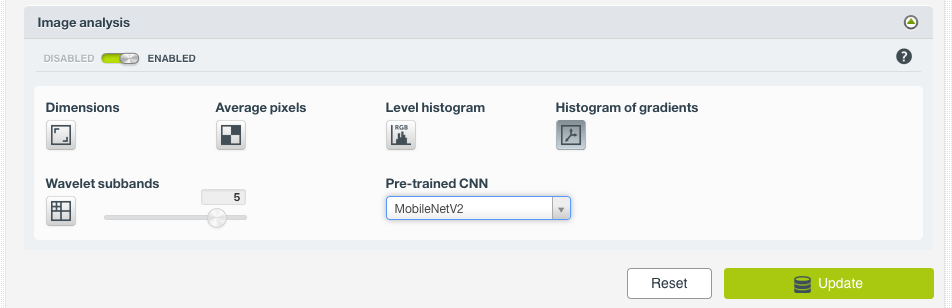

Before creating the dataset, we need to modify the default Image Analysis options of our composite source. As this is a complex problem with many possible kinds of subtle defects, we prefer to use Histogram of gradients and MobileNetV2 as feature extractors.
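To give some intuition for what a gradient-histogram feature captures, here is a deliberately simplified sketch: one orientation histogram over a whole grayscale image (a list of lists of pixel values), with each pixel’s gradient magnitude as its weight. Real HOG descriptors split the image into cells and normalize over blocks, and BigML’s extractor will differ in its details; the function name and the 9-bin choice are assumptions for illustration.

```python
import math

def gradient_orientation_histogram(image, bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations (0-180 degrees).

    Simplified: one cell covering the whole image, no block normalization.
    """
    bin_width = 180.0 / bins
    histogram = [0.0] * bins
    for y in range(1, len(image) - 1):
        for x in range(1, len(image[0]) - 1):
            # Central differences, as in the classic HOG descriptor.
            gx = image[y][x + 1] - image[y][x - 1]
            gy = image[y + 1][x] - image[y - 1][x]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue
            angle = math.degrees(math.atan2(gy, gx)) % 180.0  # unsigned orientation
            histogram[int(angle // bin_width) % bins] += magnitude
    total = sum(histogram)
    # Normalize so the histogram describes edge directions, not overall contrast.
    return [value / total for value in histogram] if total else histogram

vertical_edge = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
horizontal_edge = [[0] * 6 for _ in range(2)] + [[255] * 6 for _ in range(3)]
print(gradient_orientation_histogram(vertical_edge))    # all weight in bin 0
print(gradient_orientation_histogram(horizontal_edge))  # all weight in bin 4 (80-100 degrees)
```

Because the histogram is normalized, it describes the distribution of edge directions in the image, so extra edges (for example, from a crack) can change its shape even when they are small.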

We then update the source and create a dataset (note that the source is automatically closed as we do so). As mentioned above, we’re working with a highly imbalanced dataset, with only 6 bad (defective) instances out of the 112 total images.

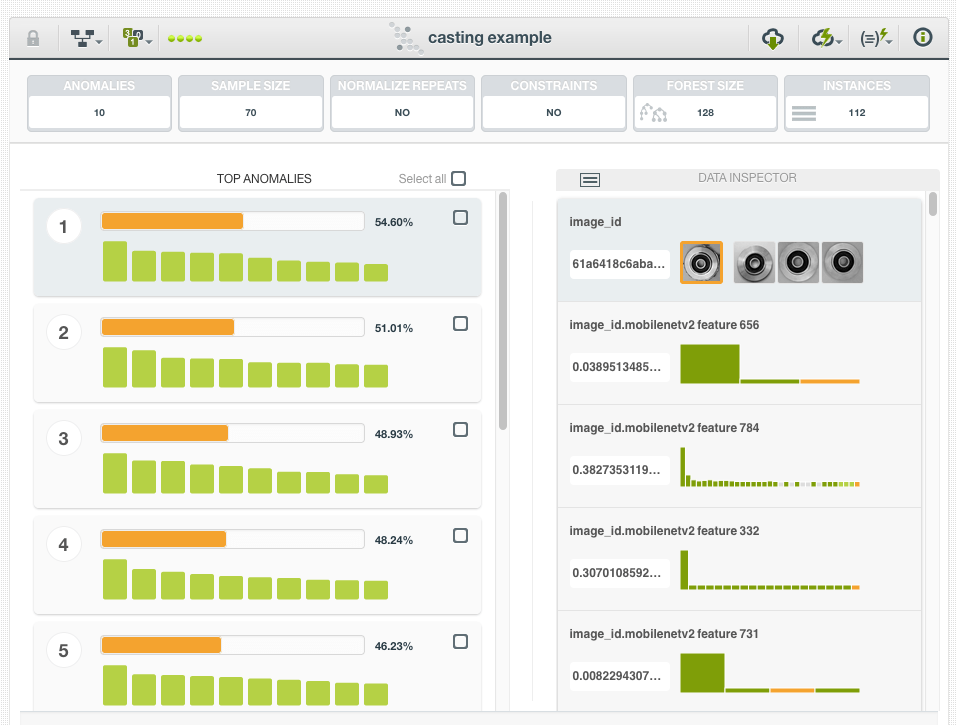

Next up, we just create a 1-Click Anomaly Detector and… voilà! Our 6 defective pump impellers appear among the Top-7 Anomalies!
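BigML describes its Anomaly Detector as based on Isolation Forest, which scores a point by how quickly random splits isolate it: anomalies sit far from the bulk of the data and end up on short branches. The from-scratch sketch below illustrates the idea on hypothetical 2-D feature vectors, using the standard Isolation Forest score s = 2^(-E[h(x)] / c(n)). It is a toy, not BigML’s implementation, and all function names and parameters are assumptions.

```python
import math
import random

EULER_GAMMA = 0.5772156649

def average_path_length(n):
    """c(n): expected path length of an unsuccessful BST search among n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n

def build_tree(rows, depth, max_depth, rng):
    """Recursively split on a random feature at a random threshold."""
    if depth >= max_depth or len(rows) <= 1:
        return {"size": len(rows)}
    feature = rng.randrange(len(rows[0]))
    low = min(r[feature] for r in rows)
    high = max(r[feature] for r in rows)
    if low == high:  # simplification: stop instead of retrying another feature
        return {"size": len(rows)}
    threshold = rng.uniform(low, high)
    return {
        "feature": feature,
        "threshold": threshold,
        "left": build_tree([r for r in rows if r[feature] < threshold], depth + 1, max_depth, rng),
        "right": build_tree([r for r in rows if r[feature] >= threshold], depth + 1, max_depth, rng),
    }

def path_length(row, node, depth=0):
    """Depth of the leaf `row` falls into, plus c(size) for leaves left unsplit."""
    if "threshold" not in node:
        return depth + average_path_length(node["size"])
    branch = "left" if row[node["feature"]] < node["threshold"] else "right"
    return path_length(row, node[branch], depth + 1)

def anomaly_scores(rows, n_trees=100, sample_size=256, seed=0):
    """Scores in (0, 1]; closer to 1 means easier to isolate. Needs at least 2 rows."""
    rng = random.Random(seed)
    sample_size = min(sample_size, len(rows))
    max_depth = math.ceil(math.log2(sample_size))
    trees = [build_tree(rng.sample(rows, sample_size), 0, max_depth, rng)
             for _ in range(n_trees)]
    normalizer = average_path_length(sample_size)
    return [2.0 ** (-(sum(path_length(r, t) for t in trees) / n_trees) / normalizer)
            for r in rows]

def top_k(scores, k):
    """Indices of the k highest-scoring rows, most anomalous first."""
    return sorted(range(len(scores)), key=scores.__getitem__, reverse=True)[:k]

# Hypothetical 2-D features: 50 normal parts plus 2 far-away outliers (indices 50, 51).
data_rng = random.Random(42)
rows = [(data_rng.gauss(0, 1), data_rng.gauss(0, 1)) for _ in range(50)]
rows += [(25.0, 25.0), (-30.0, 20.0)]
scores = anomaly_scores(rows)
print(top_k(scores, 2))
```

Sorting by score and inspecting the highest-ranked rows mirrors reviewing the top anomalies reported above; note that no labels are used anywhere.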

Why Unsupervised Learning?

If we tried to solve this problem with a supervised model, we would have to deal with a highly imbalanced dataset. Building a robust model that does not overfit from a training dataset containing only 6 instances of one of its classes is clearly not easy, and would likely be very time-consuming.
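A quick back-of-the-envelope calculation shows why plain accuracy is a misleading target here: a trivial “model” that labels every impeller as good is right about 94.6% of the time and catches no defects at all. For comparison, the last lines compute precision and recall at k for the result reported above (all 6 defective parts within the top 7 anomalies). The helper names are illustrative.

```python
def accuracy(truth, predicted):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(truth, predicted)) / len(truth)

def recall(truth, predicted, positive):
    """Fraction of truly positive instances that were predicted as positive."""
    hits = [p == positive for t, p in zip(truth, predicted) if t == positive]
    return sum(hits) / len(hits)

# Class counts from the reduced casting dataset: 106 good and 6 defective images.
truth = ["good"] * 106 + ["defective"] * 6
always_good = ["good"] * len(truth)  # majority-class baseline

print(f"defective share: {truth.count('defective') / len(truth):.1%}")  # 5.4%
print(f"baseline accuracy: {accuracy(truth, always_good):.1%}")  # 94.6%
print(f"baseline defect recall: {recall(truth, always_good, 'defective'):.1%}")  # 0.0%

# Reported above: all 6 defective parts appear among the top 7 anomalies.
k, hits_in_top_k = 7, 6
print(f"precision@{k}: {hits_in_top_k / k:.1%}")  # 85.7%
print(f"recall@{k}: {hits_in_top_k / 6:.1%}")  # 100.0%
```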

Additionally, in most manufacturing processes we may encounter a wide variety of defects, some of them caused by machine faults we have never seen before. Therefore, we can’t simply train a supervised model on historically labeled images of faults and expect it to catch every new kind of defect.

Unsupervised learning models help us to find patterns in data, even without labels!

Beyond Image Classification and Object Detection

When thinking about Convolutional Neural Networks, people sometimes forget that they can be viewed as a trainable feature extractor followed by a classifier/regressor (the readout layer at the end of the network). If you keep only the first part, you have a great tool for transforming raw images into the tabular form that BigML models expect. After applying the desired feature extractors to our image datasets, we get datasets ready to be consumed by any of the supervised and unsupervised models that BigML offers. With this approach, the sky is the limit!
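The “feature extractor plus readout layer” view can be sketched with a toy network in pure Python: two fixed 3x3 kernels stand in for learned convolutional filters, followed by ReLU and global average pooling, with a linear readout on top. Dropping the readout and keeping only `extract_features` turns each image into one row of a table. Everything here (kernel values, function names, CSV layout) is hypothetical; real CNNs such as MobileNetV2 stack many layers of learned filters.

```python
import csv
import io

# Hypothetical fixed 3x3 kernels standing in for *learned* convolutional filters.
KERNELS = {
    "vertical_edges": [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]],
    "horizontal_edges": [[-1, -1, -1], [0, 0, 0], [1, 1, 1]],
}

def conv2d_valid(image, kernel):
    """'Valid' 2-D cross-correlation (no kernel flip), as CNN layers usually compute it."""
    k = len(kernel)
    return [
        [sum(kernel[i][j] * image[y + i][x + j] for i in range(k) for j in range(k))
         for x in range(len(image[0]) - k + 1)]
        for y in range(len(image) - k + 1)
    ]

def extract_features(image):
    """Network 'body': convolution -> ReLU -> global average pooling, one value per kernel."""
    features = {}
    for name, kernel in KERNELS.items():
        activations = [max(0.0, v) for row in conv2d_valid(image, kernel) for v in row]
        features[name] = sum(activations) / len(activations)
    return features

def readout(features, weights, bias):
    """Network 'head': a linear readout layer, the part we drop to get a feature extractor."""
    return bias + sum(weights[name] * value for name, value in features.items())

def features_to_csv(images):
    """One row per image: the tabular form that downstream models consume."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["image", *KERNELS])
    writer.writeheader()
    for name, image in images.items():
        writer.writerow({"image": name, **extract_features(image)})
    return buffer.getvalue()

edge_image = [[0, 0, 9, 9] for _ in range(4)]  # hypothetical 4x4 grayscale image
print(extract_features(edge_image))  # {'vertical_edges': 27.0, 'horizontal_edges': 0.0}
print(features_to_csv({"edge.png": edge_image}))
```

Each CSV row is the kind of tabular input that any supervised or unsupervised model can consume.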

That’s exactly what we have seen in today’s example, and there are plenty of use cases where unsupervised learning on image-extracted features can be the key to solving a real-life business problem. Images from security cameras are a fine example of where unsupervised learning can have a great impact: using Anomaly Detectors, one can detect uncommon events (e.g., fires or intrusions). Another example is Cluster Analysis, which is very useful for finding related images, so that users can organize their personal digital image libraries by grouping related pictures.
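Cluster Analysis on image features can be sketched with plain k-means: assign each feature vector to its nearest centroid, move each centroid to the mean of its members, and repeat until nothing changes. The sketch below uses a deterministic farthest-first initialization instead of the usual random or k-means++ seeding, and the photo names and 2-D feature vectors are hypothetical; it does not reproduce BigML’s own clustering algorithms.

```python
import math

def kmeans(points, k, max_iterations=100):
    """Plain k-means (Lloyd's algorithm) with deterministic farthest-first initialization."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Next centroid: the point farthest from all centroids chosen so far.
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    assignment = None
    for _ in range(max_iterations):
        # Assignment step: each point joins its nearest centroid.
        new_assignment = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        if new_assignment == assignment:
            break  # converged: no point changed cluster
        assignment = new_assignment
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return assignment, centroids

# Hypothetical 2-D feature vectors extracted from a personal photo library.
photos = {
    "beach_1.jpg": (0.9, 0.1),
    "beach_2.jpg": (0.8, 0.2),
    "forest_1.jpg": (0.1, 0.9),
    "forest_2.jpg": (0.2, 0.8),
}
cluster_ids, _ = kmeans(list(photos.values()), k=2)
groups = {}
for name, cluster_id in zip(photos, cluster_ids):
    groups.setdefault(cluster_id, []).append(name)
print(groups)  # {0: ['beach_1.jpg', 'beach_2.jpg'], 1: ['forest_1.jpg', 'forest_2.jpg']}
```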

Can you imagine more examples like the above? Feel free to share them with us. Or, even better, we encourage you to try them yourself with the BigML platform!

Do You Want to Know More about Image Processing?

Please visit the dedicated release page for a gentle introduction to Image Processing, and join the FREE live webinar on Wednesday, December 15 at 8:30 AM PST / 10:30 AM CST / 5:30 PM CET. Register today; space is limited!

Finally, stay tuned for the upcoming blog posts about Image Processing with more examples and tutorials on how to use Image Processing through the API, WhizzML, and the Python Bindings, as well as how this new feature has been implemented on the BigML platform.