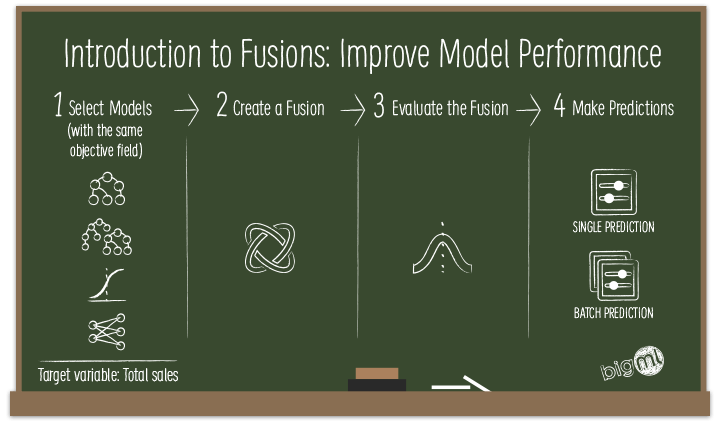

With the upcoming release on Thursday, July 12, BigML keeps pushing the envelope for more people to gain access to and make a bigger impact with Machine Learning. This release features a brand new resource providing a novel way to combine models: BigML Fusions.

In this post, we’ll give a quick introduction to Fusions, the first in a series of 6 blog posts that offer a detailed perspective of what’s behind this new capability. Today’s post explains the basic concepts, and the next one presents an example use case. Three more posts then focus on how to use Fusions through the BigML Dashboard, the API, and, in an automated fashion, WhizzML and the Python Bindings. Finally, we will complete the series with a technical view of how Fusions work behind the scenes.

Understanding BigML Fusions

Classification and regression problems can be solved using multiple Machine Learning methods on BigML, such as models, ensembles, logistic regressions, and deepnets. In previous blog posts, we’ve covered the strengths and weaknesses of these resources, e.g., logistic regression tends to perform best when the relationship between the input fields and the target variable is linear or smooth in nature, whereas deepnets are capable of handling curvilinear decision boundaries.

A typical Machine Learning project involves multiple iterations. All else being equal, we advocate starting your project with the application of models (aka decision trees) and logistic regressions as they are easy to interpret and tend to train very fast even for large datasets. You may even want to rely on the 1-click modeling options for those to get to acceptable baseline models faster with intelligent default parameters.

Next up, you can try more complex algorithms such as ensembles or deepnets to improve model performance without modifying your dataset or adding new features, which may be costly. Each of these algorithms can be applied separately in 1-click, manual configuration, or automatic optimization (i.e., hyperparameter tuning) mode.

With the most recent release, BigML has gone on to add the OptiML resource, which in a way combines the automatic optimizations for each classification and/or regression algorithm supported by the BigML platform into a single task by using Bayesian Optimization. Combined, these alternatives serve the needs of users from all levels of sophistication with differing model performance and time constraints.

However, in situations that require you to squeeze every last ounce of model accuracy out of the available data, a simple yet effective technique is to ensemble different types of Machine Learning models, averaging their predictions to balance out the individual weaknesses of any single underlying model. In this regard, Fusions are based on the same “wisdom of the crowd” principle as ensembles, under which the combination of multiple models often leads to stronger performance than any of the individual members.
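To make the averaging idea concrete, here is a minimal sketch in plain Python. It does not call BigML; it assumes each underlying model returns per-class probabilities for the same instance, and the function name, class labels, and numbers are all hypothetical.

```python
from typing import Dict, List


def average_probabilities(predictions: List[Dict[str, float]]) -> Dict[str, float]:
    """Return the unweighted mean of per-class probabilities across models."""
    if not predictions:
        raise ValueError("need at least one model prediction")
    classes = predictions[0].keys()
    return {c: sum(p[c] for p in predictions) / len(predictions) for c in classes}


# Hypothetical outputs of three different model types for one instance.
tree = {"churn": 0.90, "stay": 0.10}      # a single decision tree, very confident
logistic = {"churn": 0.40, "stay": 0.60}  # a logistic regression, leaning the other way
deepnet = {"churn": 0.55, "stay": 0.45}   # a deepnet, close to undecided

combined = average_probabilities([tree, logistic, deepnet])
predicted_class = max(combined, key=combined.get)  # class with the highest averaged probability
print(predicted_class, combined)
```

The averaged probabilities temper the single tree's overconfident vote with the more cautious opinions of the other two models, which is the balancing effect described above.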

Of course, there is no guarantee that a Fusion will improve performance every time you try one. For better results, each base model should be as accurate as possible, but ultimately the incremental performance gain comes from the diversity of mathematical representations across heterogeneous algorithms. The extent to which a Fusion enhances performance is use-case specific, but given how easy BigML makes it to create Fusions (via point-and-click on the Dashboard or through the API), you can find some easy pickings fairly quickly. Finally, even in those cases where model accuracy measures show little improvement, you can still benefit from Fusions because they tend to be more stable than single models.

Model Weights

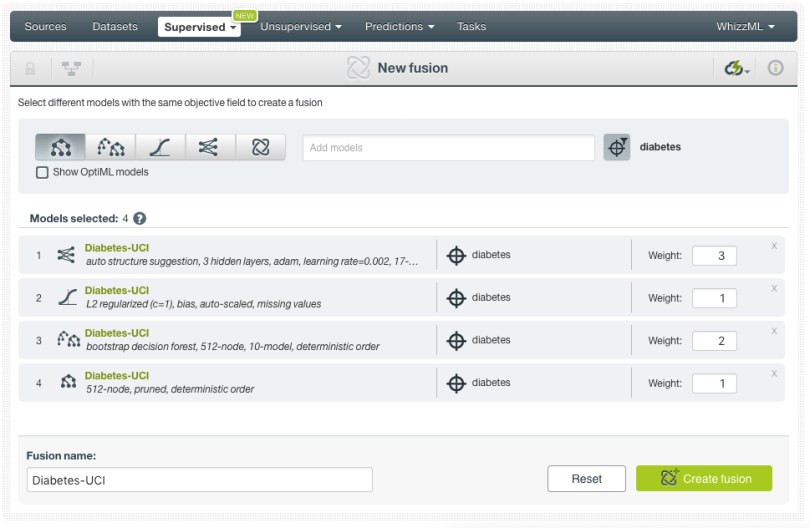

Without going into great detail in this post, when working with Fusions, you can assign different weights to the selected models before creating the Fusion. In this case, at prediction time, BigML will compute a weighted average of all model predictions according to the weights specified. Therefore, a model with a higher weight will have more influence on the final prediction. It also means that if you assign a weight of 0 to a particular model in your Fusion, the results from that model will not be taken into account.
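The effect of the weights can be sketched with a few lines of plain Python for a regression case. This is only an illustration, not BigML's implementation: the `weighted_average` helper is hypothetical, the numbers are made up, and dividing by the sum of the weights is an assumption about how the average is normalized.

```python
from typing import Sequence


def weighted_average(predictions: Sequence[float], weights: Sequence[float]) -> float:
    """Combine numeric predictions, normalizing by the total weight (assumed)."""
    if len(predictions) != len(weights):
        raise ValueError("need exactly one weight per prediction")
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    total = sum(weights)
    if total == 0:
        raise ValueError("at least one weight must be positive")
    return sum(p * w for p, w in zip(predictions, weights)) / total


# Hypothetical predictions from three models for the same instance.
predictions = [200.0, 230.0, 500.0]

equal = weighted_average(predictions, [1, 1, 1])  # every model counts the same
fused = weighted_average(predictions, [2, 1, 0])  # third model has weight 0 and is ignored
print(equal, fused)
```

With equal weights, the outlying third prediction pulls the result upward. With weights of 2, 1, and 0, the first model counts twice as much as the second, and the third no longer affects the result at all.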

Want to know more about BigML Fusions?

If you have any questions or you would like to learn more about how Fusions work, please visit the release page. It includes a series of blog posts, the BigML Dashboard and API documentation, the webinar slideshow as well as the full webinar recording.