At BigML we’re fond of celebrating every season by launching brand new Machine Learning resources, and our Fall 2016 creation will be headlined by our Latent Dirichlet Allocation (LDA) implementation. After months of meticulous work, BigML’s Development Team is making the LDA primitive available in the Dashboard and API simultaneously under the name of Topic Models. With Topic Models, words in your text data that often occur together are grouped into different “topics”. With the model, you can assign a given instance a score for each topic, which indicates the relevance of that topic to the instance. You can then use these topic scores as input features to train other models, as a starting point for collaborative filtering, or for assessing document similarity, among many other uses.

This post gives you a general overview of how LDA has been integrated into our platform. This will be the first of a series of six posts about Topic Models that will provide you with a gentle introduction to the new resource. First, we’ll get started with Topic Models through the BigML Dashboard. We’ll follow that up with posts on how to apply Topic Models in a real-life use case, how to create Topic Models and make predictions with the API, how to automate this process using WhizzML, and finally a deeper, slightly more technical explanation of what’s going on behind the scenes.

Why implement the Latent Dirichlet Allocation algorithm?

There are plenty of valuable insights hidden in your text data. Plain text data can be very useful for content recommendation, information retrieval tasks, segmenting your data, or training predictive models. The standard “bag of words” analysis BigML performs when it creates your dataset is often useful, but sometimes it doesn’t go far enough as there may be hidden patterns in text data that are difficult to discover when you’re only considering occurrences of a single word at a time. Often, the Latent Dirichlet Allocation algorithm is able to organize your text data in such a way that it causes some of this hidden information to spring to the fore.

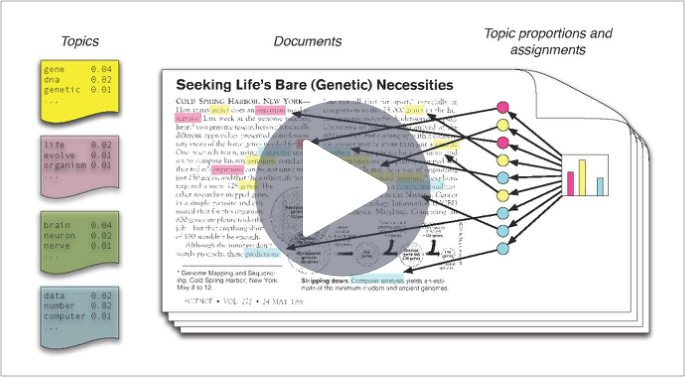

There are three key vocabulary words we need to know when we’re trying to understand the basics of Topic Models: documents, terms, and topics. Latent Dirichlet Allocation (LDA) is an unsupervised learning method that discovers different topics underlying a collection of documents, where each document is a collection of words, or terms. LDA assumes that any document is a combination of one or more topics, and each topic is associated with certain high probability terms.

Here are some additional useful pointers on our Topic Models:

- Topic Models work with text fields only. BigML is able to analyze any type of text in seven different languages: English, Spanish, Catalan, French, Portuguese, German and Dutch.

- Each instance of your dataset will be considered a document (a collection of terms) and the text input field the content of that document.

- A term is a single lexical token (usually one or more words, but can be any arbitrary string).

- A topic is a distribution over terms. Each term has a different probability within a topic: the higher the probability, the more relevant is a term for that topic.

- Several topics may have a high probability associated with the same term. For example, the word “house” may be found in a topic related to properties but also in a topic related to holiday accommodation.

- Each document will have a probability associated with each topic, according to the terms in the document.

Professor David Blei, the inventor of LDA, gives a very nice tutorial on it here:

To use Topic Models, you can specify a number of topics to discover, or let BigML pick a reasonable number of topics according to the amount of training data that you have. Another parameter allows you to also use consecutive pairs of words (bigrams) in addition to single words when fitting the topics. You may also specify case sensitivity. By default, Topic Models automatically discard stop words and high frequency words that occur in almost all of the documents as they typically do not help determine the boundaries between topics. You can also make predictions with your Topic Models both for individual instances and in batch in which case BigML will assign topic probabilities to each instance you provide; the higher the probability, the greater the association between that topic and the given instance.

For instance, imagine that you have a telecommunications company and you want to predict customer churn at the end of the month. For that, we will use all the information available in the customer service data collected when they call or send emails asking for help. Thanks to Topic Models you can automatically organize your data in a way that let’s you define exactly what a client’s correspondence was “about”. Your Topic Model will then return a list of top terms for each topic found in the data. By analyzing your text data you can also use these topics as input features in order to better cluster your customer correspondences into distinct groups you can devise actionable relationship management strategies for. The image below reveals three potential topics that might be extracted from a dataset of such correspondence. One may easily name the first topic “complaints”, the second “technical issues”, and the third “pricing concerns”.

| Topic_1 | Topic_2 | Topic_3 |

| Mistrust | Issue | Tax |

| Tired | Antenna | Cost |

| Terrible | Technical | Free |

| Doubt | Power | Dollars |

| Complaint | Break | Expensive |

| Trouble | Device | Bill |

Want to know all about Topic Models?

Visit the Topic Models release page and check our documentation to create Topic Models, interpret them, and predict with them through the BigML Dashboard and API, as well as the six blog posts of this series.