BigML uses decision trees to find patterns in data that are useful for prediction, and an “ensemble” of multiple trees is a great way to improve these predictions. The process for creating an ensemble is simple: instead of training a single decision tree, you train a bunch of them, each on a randomly sampled subset of your data. Each tree in the bunch then makes its own prediction, and majority vote wins. For example, an ensemble of 10 trees might vote 8 to 2 that a customer is likely to churn, so “churn” wins as the prediction for that customer. (Think of it like Congress, but without the filibuster or Hastert Rule.)
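To make the voting step concrete, here is a minimal sketch in Python. The function name `majority_vote` and the list of votes are made up for illustration; this is not BigML's API, just the counting logic described above.

```python
from collections import Counter

def majority_vote(predictions):
    """Return the label predicted by the largest number of trees."""
    # most_common(1) returns a one-item list holding (label, count) for the top label.
    # Assumption: ties go to whichever label was seen first; a real system
    # would need an explicit tie-breaking rule.
    winner, _count = Counter(predictions).most_common(1)[0]
    return winner

# Ten hypothetical tree predictions for one customer: 8 say "churn", 2 say "stay".
votes = ["churn"] * 8 + ["stay"] * 2
print(majority_vote(votes))  # churn
```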

The data for each tree is sampled with replacement, so some data points will appear more than once and some not at all. For example, if we start with 100 customers in our original data set, then pull from this group 100 times with replacement, the resulting new data set will still have 100 rows, but it will contain (on average) only about 63 distinct customers from the original set; the other 37 or so will not be selected at all. Each time we repeat this process, we get a freshly random batch of roughly 63 people, along with a freshly random group of roughly 37 people who are not selected. (Again, those numbers are averages that will vary from sample to sample.)
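If you want to check the 63/37 split yourself, a quick simulation does the job. This is a sketch using only Python's standard library; the helper names and the seed are arbitrary choices for illustration.

```python
import random

def distinct_fraction(n, rng):
    """Draw n items with replacement from n items; return the share of distinct items drawn."""
    sample = rng.choices(range(n), k=n)
    return len(set(sample)) / n

def expected_distinct_fraction(n):
    """Exact expected share of items that show up at least once."""
    # Each item is missed by a single draw with probability (1 - 1/n),
    # so it is missed by all n draws with probability (1 - 1/n) ** n.
    return 1 - (1 - 1 / n) ** n

rng = random.Random(42)  # arbitrary seed, only so the run is repeatable
trials = [distinct_fraction(100, rng) for _ in range(2000)]
print(sum(trials) / len(trials))        # simulated average, close to 0.63
print(expected_distinct_fraction(100))  # exact expectation, about 0.634
```

Individual samples bounce around that average, which is exactly the "varies from sample to sample" caveat.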

This has the benefit of reducing the impact of outliers. Suppose my group of 100 customers contains a single Joker, an outlier that is utterly useless for learning. If I train only a single tree on the entire 100-customer data set, then the Joker can mess up my predictions. But if I use the trick above to create 10 different decision tree models, the Joker now has to clear two hurdles: first, he has to be one of the 63 customers selected for each sample, and second, he has to mess up enough trees to meaningfully alter the majority vote.

A sample drawn this way, where we sample n times with replacement from an original collection of n data points, is called a bootstrap sample. (If we sample 50 times with replacement from an original data set of 100, that’s very nice, but it ain’t a bootstrap sample.) When we use multiple bootstrap samples to train an ensemble of trees, it’s called bagging, short for “bootstrap aggregating”. BigML uses bagging (and a related approach called random decision forests) to train its ensembles.
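Here is a rough sketch of the bagging loop in Python. To keep it short and dependency-free, the "tree" is a toy stand-in (`train_majority_model`) that just predicts the most common label in its sample; a real ensemble would train an actual decision tree there. All names and the data are hypothetical, and none of this is BigML's implementation.

```python
import random
from collections import Counter

def bootstrap_sample(rows, rng):
    """Sample len(rows) rows with replacement, so the sample matches the original size."""
    return rng.choices(rows, k=len(rows))

def train_majority_model(rows):
    """Toy stand-in for a decision tree: always predicts the most common label it saw."""
    top_label = Counter(label for _features, label in rows).most_common(1)[0][0]
    return lambda features: top_label

def bagging(rows, train, n_models, rng):
    """Train n_models models, each on its own bootstrap sample."""
    return [train(bootstrap_sample(rows, rng)) for _ in range(n_models)]

def predict(models, features):
    """Aggregate the individual predictions by majority vote."""
    votes = Counter(model(features) for model in models)
    return votes.most_common(1)[0][0]

# Hypothetical data: (features, label) pairs with 70 "stay" and 30 "churn".
rows = [({"id": i}, "stay" if i < 70 else "churn") for i in range(100)]
rng = random.Random(0)  # arbitrary seed for repeatability
models = bagging(rows, train_majority_model, n_models=10, rng=rng)
print(predict(models, {"id": 3}))
```

Passing the training function in as an argument keeps the bootstrap-and-vote machinery separate from whatever learner you plug in.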

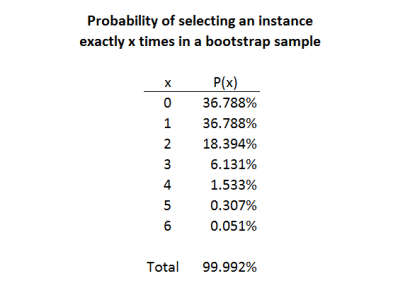

Creating all of these bootstrap samples might sound hard, but there’s actually a ridiculously easy shortcut. Instead of keeping a list of all users in memory so we can pick them out of the metaphorical hat, we just go through the list one by one in a single pass. Starting with user 1, we roll dice to decide how many times (if any) he appears in the bootstrap sample. We do the same thing for user 2, etc., until we have the sample size we want. In 99.99% of cases, a user will show up 6 or fewer times, so we only need to consider seven probabilities: the odds of showing up 0, 1, 2, 3, 4, 5, and 6 times. Even better, these numbers are easy to compute: the probability of showing up exactly x times is simply 1/(e * x!), which is the Poisson distribution with a mean of 1. Strictly speaking, this formula assumes a data set that is infinitely large, but fortunately the percentages are a good approximation for data sets as small as 100 instances.
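The one-pass trick can be sketched in a few lines of Python. This is an illustration under a couple of assumptions of my own: the names are hypothetical, and the tiny leftover probability of 7 or more appearances is folded into the "6 times" case rather than handled separately.

```python
import math
import random

MAX_COUNT = 6
# P(x) = 1 / (e * x!) for x = 0, 1, ..., 6
PROBS = [1 / (math.e * math.factorial(x)) for x in range(MAX_COUNT + 1)]

def draw_count(rng):
    """Roll the dice: how many times does one record appear in the sample?"""
    u = rng.random()
    cumulative = 0.0
    for count, p in enumerate(PROBS):
        cumulative += p
        if u < cumulative:
            return count
    return MAX_COUNT  # assumption: the leftover tail (under 0.01%) counts as 6

def one_pass_bootstrap(records, rng):
    """Stream through the records once, yielding each one 0 to 6 times."""
    for record in records:
        for _ in range(draw_count(rng)):
            yield record

rng = random.Random(7)  # arbitrary seed for repeatability
users = range(1, 101)
sample = list(one_pass_bootstrap(users, rng))
print(len(sample))       # near 100, though usually not exactly 100
print(len(set(sample)))  # distinct users, typically somewhere around 63
```

Because each user's count is drawn independently, the total sample size comes out close to the size of the original data rather than exactly equal to it, and since `one_pass_bootstrap` is a generator, nothing has to be held in memory beyond the current record.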

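So without further ado, here are the seven magic numbers for creating a bootstrap sample in just one pass (computed from the formula above and rounded to four decimal places):

| Times selected (x) | Probability, 1/(e * x!) |
|---|---|
| 0 | 0.3679 |
| 1 | 0.3679 |
| 2 | 0.1839 |
| 3 | 0.0613 |
| 4 | 0.0153 |
| 5 | 0.0031 |
| 6 | 0.0005 |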

Some interesting things about this table:

- As mentioned above, the probability of a data point showing up at least once is (1 – 0.368) = 0.632.

- The odds of not getting picked are the same as the odds of getting picked exactly once. In both cases, the probability is 0.368, or 1/e.

- These probabilities sum up to more than 99.99%, so we really do get away with ignoring cases where a data point is picked more than 6 times.
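For anyone curious where 1/(e * x!) and the observations above come from, here is a short derivation sketch. It assumes each of the n draws picks a given data point independently with probability 1/n; the symbol X (the number of times that data point is selected) is introduced here just for illustration.

$$
P(X = x) = \binom{n}{x}\left(\frac{1}{n}\right)^{x}\left(1 - \frac{1}{n}\right)^{n - x} \;\xrightarrow{\; n \to \infty \;}\; \frac{e^{-1}}{x!} = \frac{1}{e \cdot x!}
$$

$$
P(X = 0) = P(X = 1) = \frac{1}{e} \approx 0.368, \qquad P(X \ge 1) = 1 - \frac{1}{e} \approx 0.632
$$

$$
\sum_{x=0}^{6} \frac{1}{e \cdot x!} \approx 0.99992
$$

The first line is the usual limit that turns a binomial distribution into a Poisson distribution with mean 1; the other two lines are the facts from the list, read straight off that formula.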

(Huge thanks to BigML’s Adam Ashenfelter for this awesome blog post about one-pass sampling with replacement.)