Nowadays, many knowledge workers have access to lots of data that can be analyzed to extract interesting insights. This has brought people with different profiles and skill sets to explore Machine Learning. However, this data deluge can also be likened to a deep forest hiding the golden tree. Generating a large number of features to solve a Machine Learning problem can be a very time and resource-intensive affair. One can easily get lost in the complexity. How does one tell which features to use and which ones to spare then? Fortunately, machines can also help extract relevant features to our problem while discarding the ones that don’t add value. This process is known as automated feature selection.

An example of feature selection: The Boruta package

Different methods can be used to automatically select the significant features for a classification or regression problem. In this post we’ll follow the idea implemented in the Boruta package, written in R. In short, this method takes advantage of random decision forests and uses the field importance derived from the random decision forest splits to decide which features are really relevant.

A random decision forest is an ensemble of decision trees, each of which is built from a random sample of your data. The random decision forest predictions are then computed by majority vote or average of the individual model predictions. The sampling to build the trees is twofold: it picks a random subset of the available rows for each tree as well as considering a random subset of the available features for each split. This procedure makes random decision forests quite powerful compared to other Machine Learning methods as the ensemble is able to generalize well when facing entirely new input data.

Let’s dig into what the feature selection algorithm in the Boruta package does:

- Adds to the original dataset a new feature per column (a shadow feature), except for the one that you want to predict. The new feature will contain values of the original feature chosen at random. Thus, these values will have no correlation with the target value to be predicted.

- Creates a random decision forest using the extended dataset. As a result, we get the importance that each feature has when it is used to split the data.

- Compares the importance of the original features to the maximum of the importance for the new shadow features, which acts as a threshold of what level of importance can be achieved just randomly.

- Detects the features whose importance is above and below that threshold. Those below the threshold are considered unimportant and removed from the original dataset.

- The new reduced dataset goes again through these steps until all features are tagged as important or unimportant (or a maximum number of runs is reached).

So here random decision forests are used as a mechanism to reduce the dimensions you need to cope with in your problem in a quick and effective way. You may be thinking that methods like this are only available for programmers or that they require a lot of expertise to be properly used. Well, here’s where BigML comes to the rescue.

Boruta feature selection for non-programmers

For those who are not interested in the implementation details or don’t have the programming skills to dive into scripts and libraries, we’ve created a script in BigML, which mimics the procedure used in the Boruta package. The good news is that this script is available in the scripts gallery and you just need to clone it and add it to your BigML Dashboard menu as a “one-click action”. From then on, you’ll be able to create a new filtered dataset that automatically excludes all the unimportant features in a single click!

This will certainly:

- reduce both the size and elapsed time of the Machine Learning tasks that you want to perform next up e.g., creating new model iterations.

- clarify the relationships between the remaining features and your target variable (i.e., the objective field to be predicted).

- probably reduce also the times and costs involved in data acquisition overall.

If you think this would be a handy addition to your Machine Learning arsenal, just:

- go to the Boruta 1-click Feature Selection script in BigML’s gallery.

- add it to your scripts list

- add it to the datasets menu as a 1-click action.

And voila, Boruta feature selection has now become one of your 1-click menu options! Note: you don’t need to read the rest of this post or bother about the programming details if you are happy with what we have gone over thus far. If your curiosity is not yet fully quenched, keep reading.

Peeking behind the scene: WhizzML

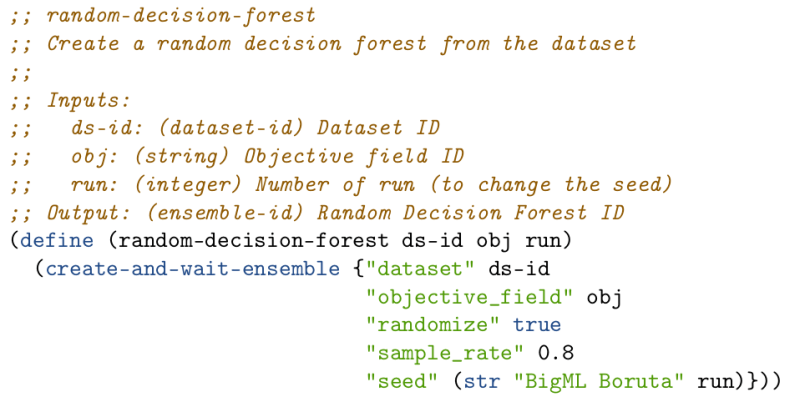

For those of you that are interested in the implementation details, this script has been built by using WhizzML. WhizzML is the domain specific language that BigML recently added to the platform. In WhizzML, all Machine Learning tasks and resources are first-class citizens. Here’s an example from the Boruta feature selection script. When you need to create the random decision forest, you just use a standard library function to create ensembles by providing the configuration parameters: the dataset ID you start from and the field that you want to predict (the objective field in BigML’s language).

The lines prefixed with ;; are comments and (define ...) is the directive, which defines a function or a variable in WhizzML. So here we define the random-decision-forest function. The body of this function is calling the create-and-wait-ensemble standard library function, which needs a map of arguments to create the random decision forest ensemble. The map is written as an alternating list of keys and their associated values. The first pairs in the map of arguments are the dataset ID and the objective field. The remaining attributes are used to define the sampling settings in the random decision forest. You can learn more about the available arguments in the API Documentation for Developers.

Another key step in the algorithm is transforming the original dataset by extending the number of features with a shadow field per feature. You can think that this will be the tricky bit, because you must randomly select values of the original field to fill the new one. Flatline, BigML‘s language for dataset transformations comes in handy here. Flatline offers weighted-random-value, which returns a value in the range of a given field while maintaining its distribution of values. Thus, by combining WhizzML and Flatline, creating a new extended dataset boils down to just two lines of code:

In the first line, the new-fields structure needed for the dataset transformation is created (you can learn more about that in the API Documentation for Developers). We set the new field names by prepending "shadow " to the original ones, and create their corresponding value using the flatline WhizzML function. Finally, the create-and-wait-dataset WhizzML function of the standard library is used to handle the new dataset creation.

The rest of the code is basically managing input and output formats, and handling iterations with a loop WhizzML structure. In a handful of lines of code, we’ve been able to implement the powerful Boruta feature selection algorithm in a parallelized, scalable and distributed fashion such that even non-programmers can adopt it. That’s what we call democratizing Machine Learning. Now it’s your turn to make the best of it for your next Machine Learning project!

One comment