In the first part of this series, we saw how to insert machine-learned model predictions into a webpage on the fly, using a content script browser extension that grabs the relevant pieces of the webpage and feeds them into a BigML actionable model. At the conclusion of that post, I noted that actionable models are very straightforward to use, but their static nature means a lot of effort goes into maintaining the browser extension if the underlying model is constantly updated with new data. In this post, we’ll see how to use BigML’s powerful API both to keep your models up to date and to write a browser extension that stays current with your latest models.

Staying up to date

In keeping with our previous post, we will be working on a model to predict the repayment status of loans on the microfinance site Kiva. In the last post, we used a model that was trained nearly one year ago on a snapshot of the Kiva database. Hundreds of new loans become available every day, which means this model is starting to look a bit long in the tooth. We’ll start off by learning a fresh model from the latest Kiva database snapshot, and in doing so, we’ll get to use some of the new features we’ve added to BigML over the last year, such as objective weighting and text analysis.

However, the Kiva database snapshot is several gigabytes of JSON-encoded data, much of which won’t be relevant to our model, so we really don’t want to relearn from a new snapshot several months down the line when our hot new model becomes stale. Fortunately, we can avoid this situation using multi-datasets. Periodically, say once a month, we’ll grab the newest loan data from Kiva and build a BigML dataset. With multi-datasets, we can take our monthly dataset, our original snapshot dataset, and all the previous monthly datasets, concatenate them together, and learn a model from the whole thing. With the BigML API, this all happens behind the scenes, and all we need to do is supply a list of the individual datasets. We can do all of this with a little Python script. Here is the main loop:

```python
import dateutil.parser
import dateutil.tz
from bigml.api import BigML

KIVA_SNAPSHOT_URL = 'http://s3.kiva.org/snapshots/kiva_ds_json.zip'

PRUNE_FIELDS = ['terms', 'payments', 'basket_amount', 'description', 'name',
                'borrowers', 'translator', 'video', 'image',
                'funded_date', 'paid_date', 'paid_amount', 'funded_amount',
                'planned_expiration_date', 'bonus_credit_eligibility',
                'partner_id']

KEYS = ['sector', 'use', 'posted_date', 'location.country',
        'journal_totals.entries', 'activity',
        'loan_amount', 'status', 'lender_count']

INPUT_FIELD_IDS = ['000000',  # sector
                   '000001',  # use
                   '000003',  # location.country
                   '000004',  # journal_totals.entries
                   '000005',  # activity
                   '000006',  # loan_amount
                   '000008',  # lender_count
                   # posted-date.{year,month,day-of-month,day-of-week,hour}
                   '000002-0',
                   '000002-1',
                   '000002-2',
                   '000002-3',
                   '000002-4']

EXCLUDED_FIELD_IDS = ['000002', '000002-5', '000002-6']

OBJECTIVE_FIELD_ID = '000007'  # status

# make_ds_from_snapshot() and make_ds_from_api() are defined elsewhere
# in the full script.

if __name__ == '__main__':
    api = BigML()
    datasets = api.list_datasets("name=kiva-data;order_by=created")['objects']
    if len(datasets) == 0:
        # no pre-existing data, create from Kiva snapshot
        # download kiva snapshot to tmp file
        make_ds_from_snapshot()
    else:
        # find date of most recent BigML dataset
        last_date = dateutil.parser.parse(
            datasets[0]['created']).replace(tzinfo=dateutil.tz.tzutc())
        # use kiva api to grab new loan data
        make_ds_from_api(last_date)

    print('building model')
    # list the datasets again so the one just created is included
    datasets = api.list_datasets("name=kiva-data;order_by=created")['objects']
    model = api.create_model([obj['resource'] for obj in datasets],
                             {'name': 'kiva-model',
                              'objective_field': OBJECTIVE_FIELD_ID,
                              'input_fields': INPUT_FIELD_IDS,
                              'excluded_fields': EXCLUDED_FIELD_IDS,
                              'balance_objective': True})
    print('Done!')
```

Every dataset created by this script will have “kiva-data” as its name, so we can use the BigML API to list the available datasets and filter by that name. If none show up, then we know we need to create a base dataset from a Kiva snapshot; otherwise, we’ll use the Kiva API to create an incremental update dataset. In either case, we then proceed to create a new model using multi-datasets. All we need to do is pass a list of our desired dataset resource IDs. We assign the name “kiva-model” to the model so that it can be easily found by our browser extension. We employ a few other minor tricks in our script, such as avoiding throttling in the Kiva API. You can check out the whole thing at this handy git repo.

API-powered browser extension

Our first browser extension was a content script that fired whenever we navigated to a Kiva loan page. It would grab the loan information, feed it to the model, and then use jQuery to insert a status indicator into the webpage’s DOM tree. Our new extension won’t be very different with regard to loan data scraping and DOM manipulation; the main difference will be how the model predictions are generated. Whereas our first extension featured an actionable model, i.e., a series of nested if-else statements, our new extension will perform predictions with a live BigML model, using the RESTful API. To interface with the model, our extension now needs to know our BigML credentials. To store them, we create a simple configuration page for the extension, consisting of two input boxes and a Save button.

```html
<!DOCTYPE html>
<html>
<head><title>Kiva Predictor Options</title></head>
<body>
  <label>
    BigML Username:
    <input type="text" id="username">
  </label>
  <br>
  <label>
    BigML API Key:
    <input type="text" id="apikey">
  </label>
  <div id="status"></div>
  <button id="save">Save</button>
  <script src="options.js"></script>
</body>
</html>
```

Behind the scenes, we have a little bit of JavaScript to store and fetch our credentials using the chrome.storage API.

```javascript
// Saves options to chrome.storage
function save_options() {
  var username = document.getElementById('username').value;
  var apikey = document.getElementById('apikey').value;
  chrome.storage.sync.set({
    username: username,
    apikey: apikey
  }, function() {
    // Ask the event page to fetch the latest model with the new credentials.
    chrome.runtime.sendMessage({greeting: "fetchmodel"});
    // Update status to let user know options were saved.
    var status = document.getElementById('status');
    status.textContent = 'Options saved.';
    setTimeout(function() {
      status.textContent = '';
    }, 750);
  });
}

// Restores the username and API key inputs using the values
// stored in chrome.storage.
function restore_options() {
  // Fall back to placeholder text when nothing has been saved yet.
  chrome.storage.sync.get({
    username: 'BigML Username',
    apikey: 'BigML API Key'
  }, function(items) {
    document.getElementById('username').value = items.username;
    document.getElementById('apikey').value = items.apikey;
  });
}

document.addEventListener('DOMContentLoaded', restore_options);
document.getElementById('save').addEventListener('click',
    save_options);
```

Whenever we save a new set of credentials, we want to look up the most recent Kiva loans model found in that BigML account. However, this isn’t the only context in which we want to fetch models. For instance, it would be a good idea to get the latest model every time the web browser is started up. We’ll implement our model fetching procedure inside an event page, so it can be accessed from any part of the extension.

```javascript
function fetchModel(){
    chrome.storage.sync.get({
        username: "BigML Username",
        apikey: "BigML API Key"
    },
    function(items){
        var models_url = "https://bigml.io/andromeda/model?name=kiva-model;username=" + items.username + ";api_key=" + items.apikey ;
        var xmlHttp = new XMLHttpRequest() ;
        // third argument false => synchronous request
        xmlHttp.open("GET",models_url,false) ;
        xmlHttp.send(null) ;
        if (xmlHttp.status == 200){
            var resp = JSON.parse(xmlHttp.responseText) ;
            // only store a model if the listing actually returned one
            if (resp.objects.length > 0){
                var model = resp.objects[0].resource ;
                chrome.storage.sync.set({model:model}) ;
            }
        }
        else{
            alert("Could not list BigML models, your credentials may be incorrect.") ;
            // open the options page unless it is already open
            chrome.tabs.query({title:"Kiva Predictor Options"},function(result){
                if (result.length == 0){
                    chrome.tabs.create({url:"options.html"}) ;
                }
            }) ;
        }
    }) ;
}

function firstRun(){
    chrome.tabs.create({url:"options.html"}) ;
}

chrome.runtime.onInstalled.addListener(firstRun) ;
chrome.runtime.onStartup.addListener(fetchModel) ;
chrome.runtime.onMessage.addListener(function(request,sender,sendResponse){
    if (request.greeting == "fetchmodel"){
        fetchModel() ;
    }
}) ;
```

At the bottom of the event page, we see that it listens for the browser startup event, and for any messages with the greeting “fetchmodel”, to fire the model fetching procedure. We also see that it listens for the onInstalled event to open the configuration page for the first time. With this extra infrastructure in place, we are ready to make our modifications to the content script. Here is the script in its entirety:

```javascript
// check if a loan ID is at the end of the url
var re = /lend\/(\d+)/ ;
var result = re.exec(window.location.href) ;
var predict_url = "" ;
var model = "" ;

// get stored bigml auth parameters and build prediction url;
// the page is only annotated once these values have been loaded
chrome.storage.sync.get(['model','username','apikey'],function(items){
    predict_url = "https://bigml.io/andromeda/prediction?username=" + items.username + ";api_key=" + items.apikey ;
    model = items.model ;
    annotatePage() ;
}) ;

function annotatePage(){
    if (result !== null){
        // individual loan page
        var loan_id = result[1] ;
        var container = $("<div></div>") ;
        container.addClass("prediction-container") ;
        makeStatusIndicator(loan_id,container) ;
        $("#lendFormWrapper").after(container) ;
    }else{
        // list of loans
        $("article.borrowerQuickLook").each(function(idx,element){
            var match = re.exec($(this).find("a.borrowerName").attr("href")) ;
            if (match === null){
                return ; // no loan ID in this link, skip the entry
            }
            var container = $("<div></div>")
                .addClass("prediction-container small-container") ;
            makeStatusIndicator(match[1],container) ;
            $(this).find("div.fundAction").append(container) ;
        }) ;
    }
}

function makeStatusIndicator(loan_id,container){
    // this function executes the Kiva API query for a particular loan id,
    // and passes the returned value to the BigML API for prediction
    container.append("<span class='placeholder'>Predicting loan status…</span>") ;
    // Kiva API call
    var url = "http://api.kivaws.org/v1/loans/" + loan_id + ".json" ;
    $.get(url,function(data){
        // pass response to BigML API
        predictStatus(data.loans[0],container) ;
    }) ;
}

function predictStatus(data,container){
    // construct input data structure
    var posted_date = new Date(data.posted_date) ;
    // Javascript days are 0-6 <=> Sun-Sat, but BigML days are 1-7 <=> Mon-Sun
    var day_of_week = posted_date.getDay() ;
    if (day_of_week == 0){
        day_of_week = 7 ;
    }
    var input_data = {
        "000000": data.sector,
        "000001": data.use,
        "000003": data.location.country,
        "000004": data.journal_totals.entries,
        "000005": data.activity,
        "000006": data.loan_amount,
        "000008": data.lender_count,
        "000002-0": posted_date.getFullYear(),
        "000002-1": posted_date.getMonth()+1,
        "000002-2": posted_date.getDate(),
        "000002-3": day_of_week,
        "000002-4": posted_date.getHours()
    } ;
    var post_data = JSON.stringify({
        input_data: input_data,
        model: model
    }) ;
    console.log(post_data) ;
    console.log(predict_url) ;
    var req = new XMLHttpRequest() ;
    req.open('post',predict_url) ;
    req.setRequestHeader('Content-Type','application/json') ;
    req.onload = function(evt){
        // 201 Created means the prediction resource was created
        if (req.readyState === 4 && req.status === 201){
            var resp = JSON.parse(req.responseText) ;
            var status = resp["prediction"]["000007"] ;
            container.children(".placeholder").remove() ;
            // create DOM object for icon
            var status_span = $('<span>Predicted Status: </span>') ;
            var img = $('<img class="indicator">') ;
            // create the indicator. use chrome.extension.getURL to resolve path to image resource
            if (status == "paid"){
                img.attr("src",chrome.extension.getURL("images/green_light.png")) ;
            }else{
                img.attr("src",chrome.extension.getURL("images/red_light.png")) ;
            }
            img.attr("title","The predicted status for this loan is: " + status.toUpperCase()) ;
            status_span.append(img) ;
            container.append(status_span) ;
            // create confidence meter
            var meter_span = $('<span></span>') ;
            meter_span.addClass("label") ;
            var confidence = Math.floor(Number(resp["confidence"])*100) ;
            meter_span.text("Prediction Confidence: ") ;
            var meter = $('<div class="meter confidence-meter">') ;
            var bar = $('<div class="confidence-bar">') ;
            bar.css("width",confidence + "%") ;
            if (confidence > 66){
                bar.addClass("confidence-high") ;
            } else if (confidence > 33){
                bar.addClass("confidence-mid") ;
            } else {
                bar.addClass("confidence-low") ;
            }
            bar.attr("title",confidence + "%") ;
            meter.append(bar) ;
            container.append("<br>").append(meter_span) ;
            container.append(meter) ;
            // create link to BigML prediction page
            var resource = resp["resource"] ;
            var prediction_link = $('<a>Details</a>') ;
            prediction_link.attr("href","https://bigml.com/dashboard/" + resource)
                .attr("target","_blank") ;
            container.append("<br>").append(prediction_link) ;
        }
    } ;
    req.send(post_data) ;
}
```

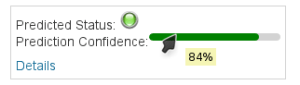

Compared to the previous version of the extension, the bulk of the differences lie in the predictStatus function. Rather than evaluating a hard-coded nested if-then-else structure, the loan data is POSTed to the BigML prediction URL with AJAX, and most of the DOM manipulation has been refactored to occur in the AJAX callback function. One perk we get from using a live model is that we get a confidence value along with our prediction of the loan status. We’ve used that to add a nice little meter alongside our status indicator icon.
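To make the request-building step easier to inspect in isolation, here is a minimal sketch that separates it from the DOM work. The helper names (`bigmlDayOfWeek`, `buildPredictionRequest`, `confidenceMeter`) are hypothetical and do not appear in the extension; the field IDs, endpoint URL, and confidence thresholds are copied from the content script above. The `encodeURIComponent` calls are an addition of this sketch, on the assumption that credentials could contain characters that need escaping.

```javascript
// Hypothetical helpers, not part of the original extension.

// Convert JavaScript's getDay() (0-6, Sunday first) to the
// 1-7, Monday-first numbering used for the BigML day-of-week field.
function bigmlDayOfWeek(date) {
  var day = date.getDay();
  return day === 0 ? 7 : day;
}

// Build the URL and JSON body for a prediction request without sending it.
// `loan` is one entry of the `loans` array returned by the Kiva API.
function buildPredictionRequest(loan, model, username, apiKey) {
  var posted = new Date(loan.posted_date);
  var inputData = {
    "000000": loan.sector,
    "000001": loan.use,
    "000003": loan.location.country,
    "000004": loan.journal_totals.entries,
    "000005": loan.activity,
    "000006": loan.loan_amount,
    "000008": loan.lender_count,
    "000002-0": posted.getFullYear(),
    "000002-1": posted.getMonth() + 1,
    "000002-2": posted.getDate(),
    "000002-3": bigmlDayOfWeek(posted),
    "000002-4": posted.getHours()
  };
  return {
    // Escaping the credentials is an assumption of this sketch.
    url: "https://bigml.io/andromeda/prediction?username=" +
         encodeURIComponent(username) +
         ";api_key=" + encodeURIComponent(apiKey),
    body: JSON.stringify({ input_data: inputData, model: model })
  };
}

// Map a 0-1 confidence value to the percentage and CSS class
// that the confidence meter uses.
function confidenceMeter(confidence) {
  var percent = Math.floor(Number(confidence) * 100);
  var cssClass = "confidence-low";
  if (percent > 66) {
    cssClass = "confidence-high";
  } else if (percent > 33) {
    cssClass = "confidence-mid";
  }
  return { percent: percent, cssClass: cssClass };
}
```

Keeping these pieces free of jQuery and `chrome.*` calls means they can be exercised outside the browser, which may help when the model's field IDs change after a retrain.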

Better browsing through big data

You can grab the source code for this Chrome browser extension here, where it is also available as a Greasemonkey user script for Firefox. We hope that this post and its prequel have shown how easily BigML models can be incorporated into content scripts and browser extensions. Beyond this kiva.org example, there are myriad potential applications on the internet where machine-learned models can be used to provide a richer and more informed web browsing experience. It’s now up to you to use what you’ve learned here to go out and realize those applications!