Slowly, machine learning has been creeping across the software world, especially in the last 5-10 years. Time was, only huge companies could leverage it, making sense out of data that would be useless to their smaller counterparts. Thanks to BigML, a number of other companies, and some great open source projects, machine learning is now democratized. Now nearly anyone, regardless of technical ability, can use machine learning at a fraction of the cost of a single software engineer.

But when I say “machine learning” above, I’m really only talking about supervised classification and regression. While incredibly useful and perhaps the easiest facet of machine learning to understand, it is a very small part of the field. Some companies (including BigML) know this, and have begun to make forays just slightly further into the machine learning wilderness. With supervised classification becoming a larger and larger part of the public consciousness, it’s natural to wonder where the next destination will be.

Here, I’ll speculate on three directions that machine learning commercialization could take in the near future. To one degree or another, there are probably people working on all of these ideas right now, so don’t be surprised to see them at an internet near you quite soon.

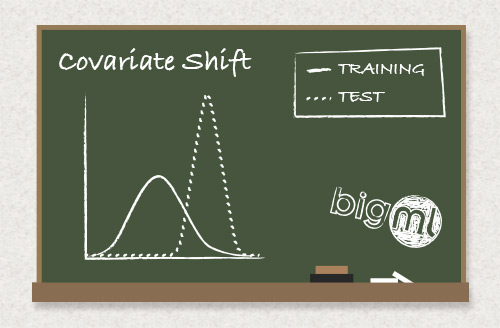

Concept Drift or Covariate Shift: You’re Living in the Past, Man!

One problem with machine-learned models is that, in a sense, they’re already out-of-date before you even use them. Models are (by necessity) trained and tested on data that already happened. It does us no good to have accurate predictive analytics on data from the past!

The big bet, of course, is that data from the future looks enough like data from the past that the model will perform usefully on it as well. But, given a long enough future, that bet is bound to be a losing one. The world (or, in data science parlance, the distribution generating the data) will not stand still simply because that would be convenient for your model. It will change, and probably only after your model has been performing as expected for some time. You will be caught off guard! You and your model are doomed!

Okay, slow down. There’s a lot of research into this very problem, with various closely related versions labeled “concept drift”, “covariate shift”, and “dataset shift”. There is a fairly simple, if imperfect, solution to this problem: Every time you make a prediction on some data, file it away in your memory. When you find yourself making way more bad predictions than you’d normally expect, recall that memorized data and construct a new model based on it. Use this model going forward.

Essentially the only subtlety in that plan is the bit about “more bad predictions than you’d normally expect”. How do you know how many you’d expect? How many more is “way more”? It turns out that you can figure this out, but it often involves some fancy math.
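To make the “way more than you’d expect” question a little more concrete, here is a minimal sketch of one simple way to answer it. It assumes you measured an expected error rate on held-out data and that the true outcome for each prediction eventually becomes known. The `DriftMonitor` class, its parameter names, and the threshold rule (a normal approximation to the binomial) are illustrative assumptions, not a description of any particular product or of the research mentioned above.

```python
import math
from collections import deque


class DriftMonitor:
    """Hypothetical helper: flags drift when recent errors far exceed expectation."""

    def __init__(self, expected_error, window=200, z=3.0):
        self.expected_error = expected_error  # error rate measured on held-out data
        self.window = window                  # how many recent predictions to remember
        self.z = z                            # how many standard deviations count as "way more"
        self.recent = deque(maxlen=window)    # (features, true_label, was_wrong)

    def threshold(self):
        # Expected error rate plus z standard deviations of a window-sized sample.
        p, n = self.expected_error, self.window
        return p + self.z * math.sqrt(p * (1 - p) / n)

    def record(self, features, true_label, predicted_label):
        # "File it away in your memory": keep the point and whether we got it wrong.
        self.recent.append((features, true_label, predicted_label != true_label))

    def drifted(self):
        # Only judge once the window is full, so the estimate is not too noisy.
        if len(self.recent) < self.window:
            return False
        error_rate = sum(wrong for _, _, wrong in self.recent) / len(self.recent)
        return error_rate > self.threshold()

    def retraining_data(self):
        # The memorized points, ready to be used to build a new model.
        return [(x, y) for x, y, _ in self.recent]
```

When `drifted()` returns true, the points from `retraining_data()` would be used to train a replacement model. Real drift detectors are usually more careful than this about window sizes and false alarms, which is where the fancy math comes in.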

We’ve already done some writing about this, and we’re working hard to make this one a reality sooner rather than later. Readers here will know the moment it happens!

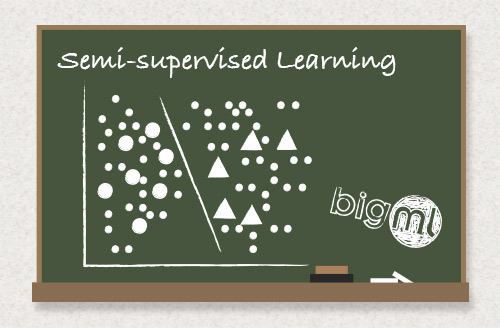

Semi-supervised Learning: What You Don’t Know Can Hurt You

Supervised classification is great when you have a bunch of data points, already nicely labeled with the proper objective. But what if you don’t have those labels? Sometimes, getting the data can be cheap but labeling it can be expensive. A nice example of this is image labeling: We can collect loads of images from the internet with simple searching and scraping, but labeling them means a person looking at every single one. Who wants to pay someone to do that?

Enter semi-supervised learning. In semi-supervised learning, we generally imagine that we have a small number of labeled points and a huge number of unlabeled points. We then use fancy algorithms to infer labels for the remainder of the data. We can then, if we like, learn a new model over the now-completely labeled data. The extension from the purely supervised case is fairly clear: One need only provide an interface where a user can upload a vast number of training points and a mechanism to hand-label a few of them.
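As a rough illustration of inferring labels from a handful of labeled points, here is a sketch of self-training, one of the simplest semi-supervised schemes. Everything in it is an assumption made for the example: the points are single numbers, the “classifier” is a nearest-centroid rule, confidence is the gap between the two nearest centroids, and there are at least two classes among the labeled points. The fancy algorithms mentioned above are considerably more sophisticated.

```python
def centroids(labeled):
    """Mean of the labeled points for each class."""
    sums, counts = {}, {}
    for x, y in labeled:
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict_with_margin(cents, x):
    """Return (label, margin); a larger margin means a more confident guess."""
    ranked = sorted(cents, key=lambda y: abs(x - cents[y]))
    best, runner_up = ranked[0], ranked[1]
    margin = abs(x - cents[runner_up]) - abs(x - cents[best])
    return best, margin


def self_train(labeled, unlabeled, batch=2):
    """Repeatedly label the most confident unlabeled points, then refit."""
    labeled, unlabeled = list(labeled), list(unlabeled)
    while unlabeled:
        cents = centroids(labeled)
        # Most confident predictions first.
        scored = sorted(
            unlabeled,
            key=lambda x: predict_with_margin(cents, x)[1],
            reverse=True,
        )
        for x in scored[:batch]:
            labeled.append((x, predict_with_margin(cents, x)[0]))
            unlabeled.remove(x)
    return labeled


# Two hand-labeled points and six unlabeled ones.
result = dict(self_train([(0.0, "a"), (10.0, "b")],
                         [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]))
```

The returned list is the now-completely labeled data, over which a new model could be learned.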

Perhaps even more interesting is the special case provided by co-training, where two separate datasets containing different views of the data are uploaded. The differing views must be taken from different sources of information. The canonical example of this is webpages, where one view of the data is the text of the page and the other is the text of the links from other pages to that page. Another good example may be cellphones, where one view is the accelerometer data and another view is the GPS data.

With these two views and a small number of labels, we learn separate classifiers in each view. Then, iteratively, each classifier is used to provide more data for the other: First, the classifier from view one finds some points for which it knows the correct class with high confidence. These high confidence points are added to the labeled set from view two, and that classifier is retrained. The process then operates in reverse, and the loop is continued until all points are labeled. The idea is fairly simple, but performs very well in practice, and is probably the best choice for semi-supervised learning in the case where one has two views of the data.
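The loop described above can be sketched in a few lines. This is a toy version under stated assumptions: each view is a single number per point, each view’s classifier is a nearest-centroid rule, and the two classifiers share one pool of labels, so whatever one view labels is available to the other when it is retrained. The function names and the data are made up for illustration.

```python
def fit_centroids(values, labels):
    """Per-class mean of a single one-dimensional view."""
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(y, []).append(v)
    return {y: sum(vs) / len(vs) for y, vs in groups.items()}


def predict(cents, v):
    """Return (label, margin); a larger margin means higher confidence."""
    ranked = sorted(cents, key=lambda y: abs(v - cents[y]))
    margin = abs(v - cents[ranked[1]]) - abs(v - cents[ranked[0]])
    return ranked[0], margin


def co_train(view1, view2, labels, per_round=1):
    """view1 and view2 are parallel lists; labels maps index -> known label."""
    labels = dict(labels)
    views = [view1, view2]
    n = len(view1)
    teacher = 0  # which view's classifier proposes labels this round
    while len(labels) < n:
        known = sorted(labels)
        cents = fit_centroids([views[teacher][i] for i in known],
                              [labels[i] for i in known])
        unknown = [i for i in range(n) if i not in labels]
        # The teaching view labels the points it is most confident about.
        unknown.sort(key=lambda i: predict(cents, views[teacher][i])[1],
                     reverse=True)
        for i in unknown[:per_round]:
            labels[i] = predict(cents, views[teacher][i])[0]
        teacher = 1 - teacher  # now the other view takes a turn
    return labels


# Points 2 and 3 are clear in view one but ambiguous in view two;
# points 4 and 5 are the other way around. Only points 0 and 1 are hand-labeled.
view1 = [0.0, 10.0, 1.0, 9.0, 5.2, 4.8]
view2 = [0.0, 10.0, 5.1, 4.9, 1.0, 9.0]
result = co_train(view1, view2, {0: "a", 1: "b"})
```

In this toy data, each view gets to label the points it sees clearly, and the other view inherits those labels for points it would have found ambiguous on its own.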

Reinforcement Learning: The Carrot and The Stick

Further from traditional supervised learning is reinforcement learning. The reinforcement learning problem sounds similar to the classification problem: We are trying to learn a mapping from states to actions, much as in the supervised learning case where we’re learning a mapping from points to labels. The main thing that changes the flavor of the problem is that it has much more the feel of a game: When we take an action we occasionally get a reward or punishment, depending on the state. Also, the action we take determines the next state in which we find ourselves.

A nice example of this sort of problem is the game of chess: The state of the game is the various locations of the pieces on the board, and the action you take is to move a piece (and thus change the state). The reward or punishment comes at the end of the game, when you win or lose. The main cleverness is to figure out how that reward or punishment should modify how you select your actions. More precisely, which thing or things that you did led to your eventual loss or victory?
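Chess is far too big for a short example, but the question of which actions deserve credit for a reward that only arrives at the end can be shown on a toy problem. The sketch below uses tabular Q-learning, one standard reinforcement learning technique (chosen here for illustration; it is not singled out in this post). The environment is a made-up corridor of five squares where the only reward comes from reaching the last one, and all names and parameter values are assumptions.

```python
import random

N_STATES = 5                  # squares 0..4; reaching square 4 ends the episode
ACTIONS = ("left", "right")


def step(state, action):
    """Toy deterministic environment: reward 1 only on reaching the goal."""
    nxt = max(0, state - 1) if action == "left" else state + 1
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def choose(q, state, rng, epsilon):
    """Mostly pick the best-looking action, sometimes explore at random."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q[state].values())
    return rng.choice([a for a in ACTIONS if q[state][a] == best])


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in ACTIONS} for s in range(N_STATES)}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose(q, state, rng, epsilon)
            nxt, reward, done = step(state, action)
            # Credit assignment: value flows backward from the rewarded move
            # to the earlier moves that led there, discounted by gamma.
            future = 0.0 if done else max(q[nxt].values())
            q[state][action] += alpha * (reward + gamma * future - q[state][action])
            state = nxt
    return q


def greedy_policy(q):
    """The learned mapping from states to actions."""
    return {s: max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)}


q = q_learning()
policy = greedy_policy(q)
```

After training, moves made closer to the goal carry more of the reward’s value than moves made earlier, which is one simple answer to the question of which actions led to the eventual victory.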

Because this learning setting has such a game-like feel, it might not be surprising that it can be used to learn how to play games. Backgammon and checkers have turned out to be particularly amenable to this type of learning.

So What’s It Going To Be?

It’s difficult to say whether any of these things or something else entirely will be The Next Big Thing in commercial machine learning. A lot of that depends on the cleverness of people with problems and whether they can find ways to solve their problems with these tools. Many of these technologies don’t have broad traction yet simply because . . . they don’t have broad traction yet (no, I’m not in the tautology club). Once a few pioneers have shown how such things can be used, lots of other people will see the light. I’m reminded of a story about the first laser, long before fiber-optic cables and corrective eye surgery, when an early pioneer said that lasers were “a solution looking for a problem”. There are plenty of technologies like these in the machine learning literature: great answers looking for the right question, waiting for someone to match them up.

Got other ideas about what machine learning technology should be democratized next? We’d love to hear about it in the comments below!
