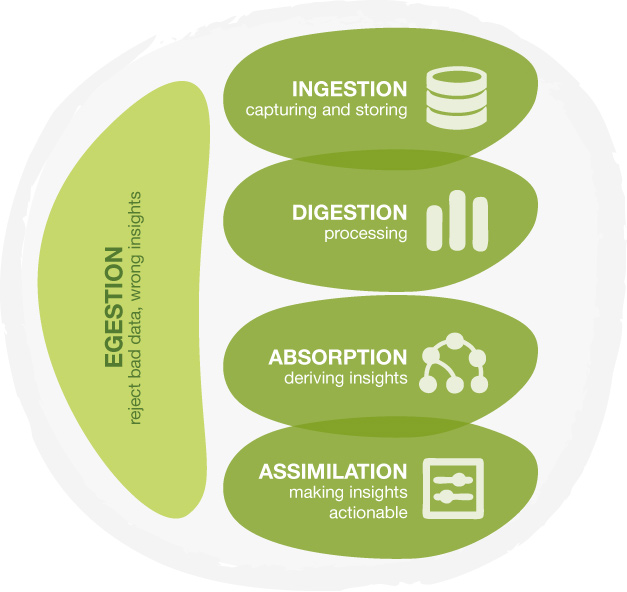

A previous post compared working with data to a digestive system. We think that the Big Data technology in Ingestion and Digestion (capturing data and processing data) is hyped at the expense of Absorption and Assimilation (creating new insights and putting them into action). Acquiring a new tool, installing it on your hardware and learning how to run it may take months. Even though some vendors claim you can get new insights within hours, setting up a Hadoop cluster and installing everything will take quite a bit more than that. What you really want is to get to the insights as soon as possible and put them into action. Then validate the results and work your way back to technology for a more robust implementation if needed. How? That’s what this post is about.

8 Steps to Get Started.

Here are our 8 simple steps to get started with Big (or small) Data.

- Start with a small data sample. You don’t need the full width and depth of your data to find interesting stuff. Start small. It saves you a lot of technology headache at the start.

- Use BigML or any other simple-to-use tool to build an initial predictive model that you can understand and quickly integrate. Check this series of blog posts comparing some SaaS machine learning offerings. The important words here are simple, actionable and understandable. You don’t want to waste too much time figuring out how to use a tool. Nor do you want to lose time translating and coding the outcomes. And you want to understand the outcomes so you can carry out the next step.

- Check if the model gives you any practical insights. Explore the model. Find its gold. Or, if there is none, discard it.

- Use the model to generate predictions and see if it can improve your company’s performance. Put the model into action. Find a playground in your company to run a test and measure the changes in churn, conversion, risk or whatever you modeled.

- Check how more data can improve the model. You can add data in two ways: simply add more datapoints to the same dataset. Or you can add more features to the dataset, new pieces of information, to enhance the model and find new relationships with possibly better performance. In spreadsheet terms: you can add more rows or more columns.

- Check if this more sophisticated model beats the previous model. Again: put it into action and see how it performs. Does it improve on the previous results?

- Iterate. The secret is to try multiple models to see which one gives the best results at this point in time. Continue to iterate to find the best fit.

- Now check the technology concept suitable for your situation. Now that you’ve seen some successful implementations of predictive models, you’re much better equipped to evaluate various vendors’ offerings. You have experienced how a cloud-based service saves you the annual license fees, investment in hardware, training and so on. You can compare that to the more traditional on-site implementations and pick the concept that best fits your needs and budget.
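The loop in the steps above (start small, build a model, add columns or rows, compare) can be sketched in a few lines of plain Python. This is only an illustration under loud assumptions: the customer records are synthetic, the churn rule is invented, and the "model" is a hypothetical majority-vote lookup table standing in for whatever your tool of choice builds. None of it is BigML code.

```python
import random
from collections import Counter, defaultdict

def make_rows(n, seed=0):
    """Synthetic customer records; the churn rule is invented for illustration."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        calls = rng.randint(0, 10)    # support calls last quarter (hypothetical)
        months = rng.randint(1, 48)   # tenure in months (hypothetical)
        rows.append({"calls": calls,
                     "tenure_years": months // 12,
                     "churn": calls >= 6 and months < 24})
    return rows

def fit(rows, features):
    """Build a majority-vote lookup table keyed on the chosen feature columns."""
    votes = defaultdict(Counter)
    for r in rows:
        votes[tuple(r[f] for f in features)][r["churn"]] += 1
    # Fallback for feature combinations never seen during training.
    default = Counter(r["churn"] for r in rows).most_common(1)[0][0]
    table = {k: c.most_common(1)[0][0] for k, c in votes.items()}
    return lambda r: table.get(tuple(r[f] for f in features), default)

def accuracy(model, rows):
    """Fraction of rows where the prediction matches the known outcome."""
    return sum(model(r) == r["churn"] for r in rows) / len(rows)

data = make_rows(5000)
sample, holdout = data[:300], data[4000:]                # start with a small sample

narrow = fit(sample, ["calls"])                          # first, simple model
wide = fit(sample, ["calls", "tenure_years"])            # more columns
bigger = fit(data[:3000], ["calls", "tenure_years"])     # more rows

for name, model in [("narrow", narrow), ("wide", wide), ("bigger", bigger)]:
    print(name, round(accuracy(model, holdout), 3))      # compare on held-out data
```

The comparison is always made on the same held-out rows, so a model only counts as better when it predicts unseen cases better, not when it merely memorizes more of its own training data.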

Actionable analytics.

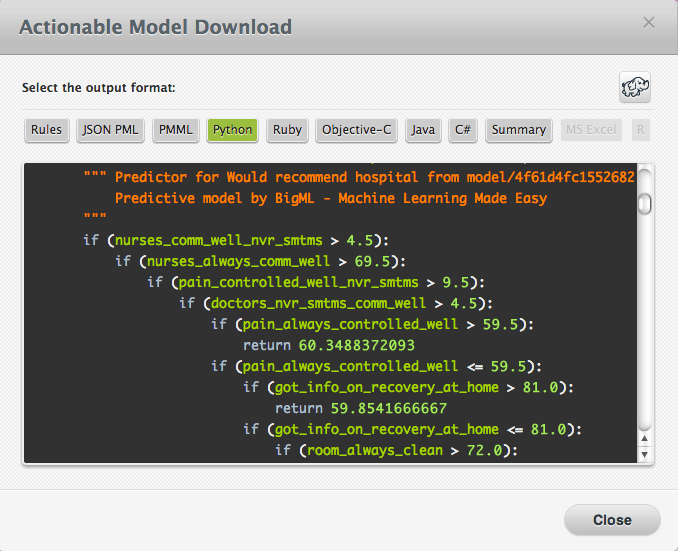

Central to this approach is your ability to easily create various models and put them to work immediately. At BigML we have built both facets. Your model is made in just a few clicks. And connecting to our platform to run predictions was already very simple through the API. Recently we’ve put some effort into making your models downloadable, so you can run them in your own environment. It is as simple as clicking the download icon, selecting the language you need and then copying and pasting the generated code. We even added a little elephant button. This activates a special Hadoop version of the download (available for some of the languages): the code is split into a mapper and a reducer and is ready to deploy in your Hadoop environment.
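To give a feel for what a mapper/reducer split looks like, here is a minimal sketch in the style of Hadoop Streaming. It is not the code BigML generates: the `predict` function is a hypothetical stand-in for a downloaded model's rules, and the `calls,months` input format is an assumption made for the example.

```python
from itertools import groupby

def predict(calls, months):
    """Hypothetical stand-in for a downloaded model's if/else rules."""
    return "churn" if calls >= 6 and months < 24 else "stay"

def mapper(lines):
    """Emit one 'prediction<TAB>1' record per CSV input line 'calls,months'."""
    for line in lines:
        calls, months = (int(x) for x in line.strip().split(","))
        yield f"{predict(calls, months)}\t1"

def reducer(records):
    """Sum the counts per key; records must arrive sorted by key."""
    pairs = (rec.split("\t") for rec in records)
    for key, group in groupby(pairs, key=lambda kv: kv[0]):
        yield f"{key}\t{sum(int(v) for _, v in group)}"

# Local dry run: sorted() plays the role of Hadoop's shuffle-and-sort phase.
lines = ["7,3", "2,30", "9,12", "6,40"]
result = list(reducer(sorted(mapper(lines))))
print(result)
```

In a real Hadoop Streaming job the two functions would live in separate scripts reading from standard input and writing to standard output. Running them locally on a handful of lines first is a cheap way to check the logic before touching a cluster.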

Get started.

All the pieces are available, usually at little to no cost. Registering at BigML is done in a minute, doesn’t require you to leave any personal details and will get you up to 50MB of free modeling credits. All you need is some awesome data. Why don’t you get started?

Great post! Technically the steps you identify are the correct ones. But in order to be effective in an organization, I believe some other things need to be considered as well:

– The steps only have an impact on the organization if carried out by the appropriate person (someone that the organization listens to, or that engages with the right stakeholders). Otherwise the great results will be there, but nobody cares.

– Using your tool will move data out of an organization into the Cloud. And this might cause problems, especially if the cloud is from a small start-up, probably running on Amazon or similar. Anonymization of the data in advance is advisable (see this great new publication http://www.ico.gov.uk/for_organisations/data_protection/topic_guides/anonymisation.aspx)

– You position the tool as a quick way to start without investments somewhat at the expense of being the final solution.

Regards

Thanks Richard.

Your first and second items are certainly true. I do hope, however, that if someone comes with good results that can improve the performance of a business, they’ll find a listening ear. But reality has been known to be different.

As for data protection: indeed we have had numerous discussions with companies on that topic. Our response usually is that the best way to safeguard anonymity is to not use personally identifiable data. In the examples I have seen, personally identifiable data was never relevant. One never uses names, IDs, addresses, account numbers etc. in a predictive analysis. Nevertheless, it is a barrier companies have to overcome.

As for your third comment: we predict that when users have had a taste of BigML, the likelihood of moving to an expensive, on-site, licensed product is low. But I’ll happily take your comment to make it more explicit: BigML will suit your needs very nicely, not only as a starting point but also as a final solution!

Saw your comment on VentureBeat. It is great to see people explaining big data for what it really is… an ecosystem of capabilities.

Thanks Chris!