The 94th Academy Awards ceremony is a wrap and, thanks to an unexpected turn of events, it may well go down as the most chaotic of them all. The night began with all the familiar signs of a non-pandemic Oscars: celebrities streaming in, red carpet interviews, and the inevitable opening salvos from the night’s hosts. Then it suddenly took a turn for the worse when Chris Rock took the stage for a serving of his no-holds-barred celebrity jokes, except this time Will Smith responded with a “service return” of his own that will perhaps forever be remembered as the defining moment of this year’s Academy Awards. While we hope that tempers will cool in the coming days, we’d like to quietly move on to the movies themselves and how we did with our predictions this year.

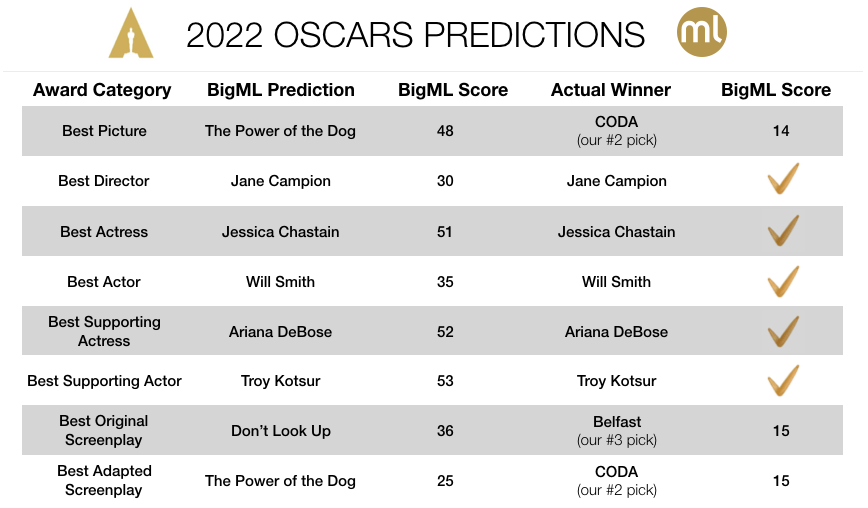

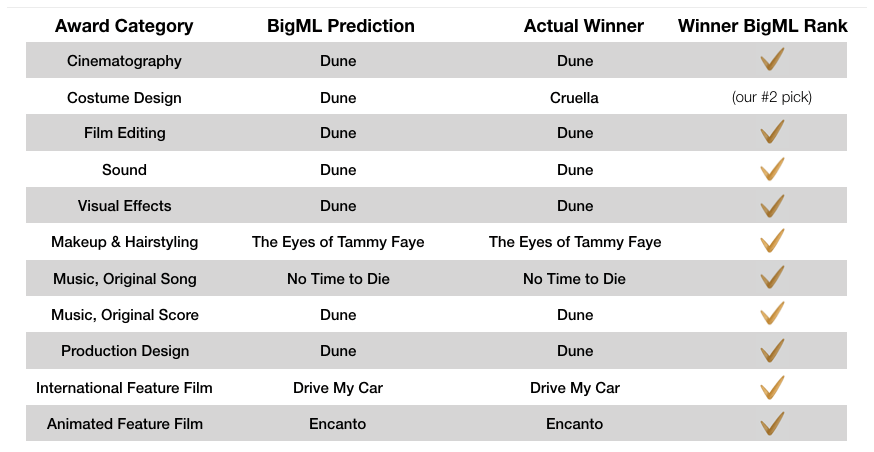

With modifications to our data and approach, this year’s prediction challenge turned out to be a fruitful one: we got 15 out of 19 predictions correct for a pretty decent hit rate of 79%! In fact, we’ve never had 15 correct picks before, and there were some question marks as to whether the more technical categories we added for the first time would prove predictable. Although a single year can be misleading, we have good reason to continue including the newly added categories going forward.

As usual, we have to remind ourselves that getting 15 out of 19 right, with anywhere from 5 to 10 nominees in each category, would be an exercise in futility if we were simply flipping coins instead. Basically, we’re talking about finding the one correct combination out of 30,517,600,000 possible combinations. As our results tables summarize, armed with a spreadsheet full of data, Machine Learning does a remarkable job of almost fully solving this complex puzzle despite having no preconceived notion of what a movie is!
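To put the "flipping coins" comparison into numbers, here is a minimal Python sketch of the arithmetic. It rests on assumptions that are not stated in the post: every category is treated as having exactly 5 nominees (the low end of the range), and random picks are treated as independent. The quoted figure is close to 5^15, so the sketch shows that case as well as the 19-category case; how the original figure was derived is our guess. The function names are illustrative only.

```python
from math import comb, prod


def ballot_combinations(nominee_counts):
    """Number of distinct full ballots when picking one nominee per category."""
    return prod(nominee_counts)


def chance_of_at_least(hits, categories, p_single):
    """Binomial tail: probability of at least `hits` correct picks out of
    `categories`, if each pick is right independently with probability p_single."""
    return sum(
        comb(categories, k) * p_single**k * (1 - p_single) ** (categories - k)
        for k in range(hits, categories + 1)
    )


# Assumption: 15 categories with 5 nominees each (closest match to the quoted figure).
combos_15 = ballot_combinations([5] * 15)

# Assumption: all 19 categories with exactly 5 nominees each.
combos_19 = ballot_combinations([5] * 19)

# Chance of matching or beating 15 of 19 by picking uniformly at random.
p_15_of_19 = chance_of_at_least(15, 19, 1 / 5)

print(f"5^15 ballots: {combos_15:,}")
print(f"5^19 ballots: {combos_19:,}")
print(f"P(at least 15 of 19 by chance): {p_15_of_19:.2e}")
```

Under these assumptions, the chance of a random ballot reaching 15 hits works out to a few in a hundred million. Categories with more than 5 nominees would only make random guessing look worse.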

Analysis and Lessons Learned

We found that we could have achieved even better results had we stuck to averaging the Top 10 OptiML models, which would have yielded 17 hits out of 19 for a hit rate of almost 90%. Only the Best Picture and Best Original Screenplay picks would still be incorrect, while our misses in Best Adapted Screenplay and Best Costume Design would turn into hits. Considering how easy it is to run an OptiML model search once you have created a dataset (it’s really a single click, folks), we can truly talk about bringing Machine Learning to the masses.
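The idea of averaging the top models can be sketched in a few lines of Python. This is not the BigML implementation, just an illustration under assumptions: each model is assumed to output a win score for every nominee, models are ranked by a single validation metric where higher is better, and all names and numbers below are made up.

```python
from collections import defaultdict


def average_top_models(models, top_n=10):
    """Average per-nominee scores over the top_n models by validation metric.

    models: list of dicts with "metric" (higher is better) and
            "scores" (nominee -> predicted win score).
    Assumes every model scores every nominee.
    """
    best = sorted(models, key=lambda m: m["metric"], reverse=True)[:top_n]
    totals = defaultdict(float)
    for model in best:
        for nominee, score in model["scores"].items():
            totals[nominee] += score
    return {nominee: total / len(best) for nominee, total in totals.items()}


def top_pick(avg_scores):
    """Nominee with the highest averaged score."""
    return max(avg_scores, key=avg_scores.get)


# Hypothetical toy data for a single category (not real model output).
models = [
    {"metric": 0.91, "scores": {"Nominee A": 0.60, "Nominee B": 0.30, "Nominee C": 0.10}},
    {"metric": 0.89, "scores": {"Nominee A": 0.20, "Nominee B": 0.70, "Nominee C": 0.10}},
    {"metric": 0.88, "scores": {"Nominee A": 0.30, "Nominee B": 0.60, "Nominee C": 0.10}},
    {"metric": 0.50, "scores": {"Nominee A": 0.05, "Nominee B": 0.05, "Nominee C": 0.90}},
]

averaged = average_top_models(models, top_n=3)
winner = top_pick(averaged)
print(winner, round(averaged[winner], 3))
```

In this toy case the single best model would pick Nominee A, while the average of the top three picks Nominee B. That is the kind of reversal averaging can produce when the top models disagree.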

It’s also noteworthy that good old Logistic Regression models made up a good part of the top-performing OptiML models, which tells us that complexity doesn’t always add value when it comes to Machine Learning. Simply aim for what works. Ironically, we see the opposite quite often in enterprise AI/ML projects, where data scientists and ML specialists sometimes complicate things unnecessarily. Note that our method for making predictions is transparent and documented for all 19 categories, which has added value of its own. In the same vein, organizations must decide for themselves where they stand on the spectrum of tradeoffs among accuracy, complexity, risk, time-to-market, and cost. Our experience since 2011 has shown that investing in data quality and data engineering is the smart way to overcome bottlenecks, while relying on the select batch of best-in-class methods the Machine Learning community has collectively produced since the 1980s.

CODA was clearly the feel-good picture of the year, and it indeed delivered a storybook ending to its eventful journey, capped with the BEST PICTURE OSCAR! The fact that this smallish independent film was acquired by media heavyweight Apple for a hefty sum lifted its exposure to a worldwide level and carried its campaign to the winner’s circle. It also marked the arrival of a new distribution model for the movie industry, one that’s likely here to stay. We will probably see more CODAs being scooped up by streaming giants and plugged into their vast networks and audiences.

As was the case in prior years, the Screenplay categories proved highly competitive. Perhaps there’s something to be said about the story itself: as the most basic element of any film, it puts nominees on the most equal footing, before production budgets, casting, and an unending array of technical talent add whole new dimensions to the final equation of competitive differentiation. We’ll look for new angles to tackle these categories next year.

This year, since we opened up our Oscars Prediction Challenge to our whole community, quite a few of you submitted predictions based on a wide variety of approaches, such as Machine Learning modeling, gut feeling, and unofficial polls of movie critics’ opinions. We’re happy to report that BigML’s own Alfonso Amayuelas got 15 of 19 picks right as well. Among the participants who didn’t apply ML, Ivana Guraieb Galland got 5 out of 6 picks correct. We’ll continue using this “crowdsourced” approach in the coming years.

History to Date Predictions Performance

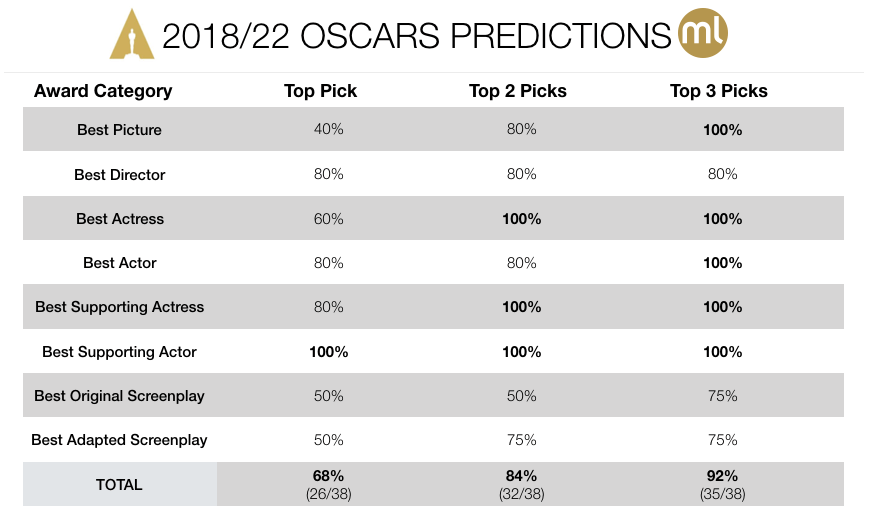

Finally, we’ve also updated the table that compiles all our predictions between the 2018 and 2022 Oscars and the corresponding hit rates for the major categories. In addition to the Top Picks that we shared annually in our past blog posts, this table shows how accuracy improves if we also count the movies that received the two highest (Top 2) or three highest (Top 3) scores. The Top Picks alone had an average hit rate of 68%, whereas coverage reached 92% with the Top 3 taken into account.
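For readers who want to compute Top Pick, Top 2, and Top 3 coverage on their own predictions, here is a minimal Python sketch. The data layout (nominees ordered by score per category) and all values are hypothetical placeholders, not our actual results.

```python
def top_k_hit_rate(ranked_predictions, winners, k):
    """Share of categories whose actual winner is among the k highest-scored nominees.

    ranked_predictions: category -> list of nominees, best score first.
    winners: category -> actual winner.
    """
    hits = sum(
        1
        for category, ranking in ranked_predictions.items()
        if winners[category] in ranking[:k]
    )
    return hits / len(ranked_predictions)


# Hypothetical rankings and winners, for illustration only.
ranked_predictions = {
    "Category 1": ["A", "B", "C", "D", "E"],
    "Category 2": ["B", "A", "C", "D", "E"],
    "Category 3": ["C", "D", "A", "B", "E"],
    "Category 4": ["E", "D", "C", "B", "A"],
}
winners = {
    "Category 1": "A",
    "Category 2": "A",
    "Category 3": "A",
    "Category 4": "A",
}

rates = {k: top_k_hit_rate(ranked_predictions, winners, k) for k in (1, 2, 3)}
for k, rate in rates.items():
    print(f"Top {k}: {rate:.0%}")
```

By construction, the rate can only stay flat or rise as k grows, which is why Top 3 coverage is always at least as high as the Top Pick hit rate.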

As the pioneers of ML-as-a-Service here at BigML, we welcome students of Machine Learning to build their own models on top of the public movies 2000-2021 dataset. It’s a great way to put your Machine Learning skills to the test quickly, without the overhead of downloading and installing many open source packages and worrying about compatibility issues or hard-to-decipher error messages. It takes 1 minute to create a FREE account and about as much time to clone the movies dataset to your account. As always, let us know how your results turn out on Twitter @bigmlcom, or send us a note anytime at feedback@bigml.com!