In the third post of the series, we looked at the types of models supported by each service. While some are useful for understanding your data, the primary goal of many machine learning models is to make accurate predictions from unseen data. Say you want to sell your house but you don’t know how much it is worth. You have a dataset of home sales in your city for the past year. Using this data, you train a model to predict the sales price of a house based on its size and the year it was built. Will this model be useful for predicting how much your own house will sell for? In this post, I will discuss how a model’s prediction abilities are evaluated, the results of comparing models from each service, and some general observations about making predictions with each service.

As we saw in the previous post, some of the services report cross-validation scores on the models they create. These scores are a measure of a model’s ability to leverage what it learned from the training data to make accurate predictions from unseen data. A better score on your housing price model will give you more confidence that it will accurately predict the sales price of your house.

The key to calculating these scores is to split the rows of the dataset into two parts, a training set and a test set, before training the model. For example, you might randomly select 80% of the housing sales for the training set and the remaining 20% for the test set. The training set is used to create the model and the test set is reserved for computing the score.

Why would you do this? Why not use all the data to train the model and then test predictions on this same data? If you used this approach, the model would have seen all the correct answers during training so it’s bound to perform well. We don’t want to test a model’s ability to regurgitate the training data; we want to check if it can make accurate predictions for data it has never seen.

So the training set is used to train the model. Now how do we use the test set in this example? For each house in the test set, the house size and the year it was built are used to predict the sales price without looking at the actual sales price. The predictions are then compared to the actual prices (using some choice of metric) to compute the score.
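Here is a minimal Python sketch of the split-train-score routine described above. It is only an illustration under stated assumptions: the sales data is made up, the "model" is a straight line fit on house size alone (the year built is left out to keep it short), and mean absolute error stands in for "some choice of metric".

```python
import random


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of the rows and return (training set, test set)."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_line(rows):
    """Least-squares fit of price = slope * size + intercept."""
    n = len(rows)
    mean_size = sum(size for size, _ in rows) / n
    mean_price = sum(price for _, price in rows) / n
    sxx = sum((size - mean_size) ** 2 for size, _ in rows)
    sxy = sum((size - mean_size) * (price - mean_price) for size, price in rows)
    slope = sxy / sxx
    return slope, mean_price - slope * mean_size


def mean_absolute_error(model, rows):
    """Average gap between predicted and actual prices."""
    slope, intercept = model
    errors = [abs(slope * size + intercept - price) for size, price in rows]
    return sum(errors) / len(errors)


# Made-up sales for illustration: (size in square feet, sale price).
sales = [
    (800 + 100 * i, 100_000 + 15_000 * i + (5_000 if i % 2 else -5_000))
    for i in range(20)
]

train, test = train_test_split(sales)   # 80% / 20%
model = fit_line(train)                 # the test rows are never seen here
print(len(train), len(test))
print(mean_absolute_error(model, test)) # score on unseen houses only
```

The important detail is that `fit_line` only ever receives `train`, so the score reflects houses the model has not seen.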

The Results

All right, let’s get down to business and look at some real numbers comparing BigML to its competition.

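For this Machine Learning Throwdown, I used the 10-fold cross-validation method to split the data into training and test sets. In 10-fold cross-validation, the rows are divided into ten parts and each part takes one turn as the test set while the model is trained on the other nine, so every row is used for testing exactly once. The predicted answers are compared to the actual answers using a few different metrics since each one has its strengths and weaknesses: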

- Accuracy, Macro Average F1 Score, and Macro Average Phi Coefficient for classification problems

- Mean Squared Error and R-Squared Score for regression problems
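To make the procedure concrete, here is a rough Python sketch of 10-fold splitting and four of the metrics listed above. This is not the code used for the Throwdown. The function names are illustrative, the fold assignment assumes the rows were shuffled beforehand, the macro F1 is averaged over the labels that appear in the actual answers (one common convention), and the phi coefficient is left out for brevity.

```python
def k_fold_indices(n_rows, k=10):
    """Yield (train_indices, test_indices) pairs, one per fold.

    Assumes the rows were shuffled beforehand; row i lands in fold i % k.
    """
    for fold in range(k):
        test = list(range(fold, n_rows, k))
        train = [i for i in range(n_rows) if i % k != fold]
        yield train, test


def accuracy(actual, predicted):
    """Fraction of predictions that match the actual labels."""
    return sum(a == p for a, p in zip(actual, predicted)) / len(actual)


def macro_f1(actual, predicted):
    """Unweighted mean of the per-class F1 scores."""
    pairs = list(zip(actual, predicted))
    scores = []
    for label in set(actual):
        tp = sum(a == label and p == label for a, p in pairs)
        fp = sum(a != label and p == label for a, p in pairs)
        fn = sum(a == label and p != label for a, p in pairs)
        # F1 = 2TP / (2TP + FP + FN); the denominator is never zero
        # because each label occurs at least once in `actual`.
        scores.append(2 * tp / (2 * tp + fp + fn))
    return sum(scores) / len(scores)


def mean_squared_error(actual, predicted):
    """Average squared difference between actual and predicted values."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def r_squared(actual, predicted):
    """1 minus (residual sum of squares / total sum of squares)."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1 - ss_res / ss_tot


labels = ["a", "a", "b", "b"]
guesses = ["a", "b", "b", "b"]
print(accuracy(labels, guesses))  # 0.75
print(macro_f1(labels, guesses))
```

In a full run, each `(train, test)` pair from `k_fold_indices` would be used to train a fresh model and score it, and the ten scores would then be combined into one number.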

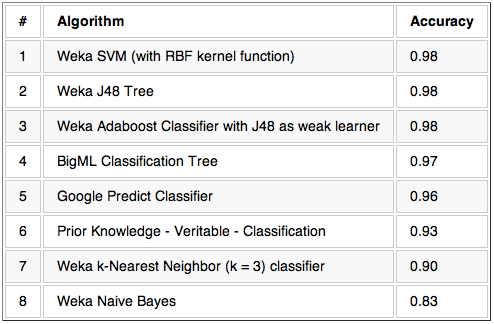

I collected cross-validation scores with the appropriate metrics for each dataset introduced in the data preparation post and each model described in the models post. The results are presented in tables, with one table for every combination of dataset and scoring metric. Here are the results for the glass classification dataset scored with the accuracy metric. The models/algorithms are sorted such that the top performers are listed first.

The Machine Learning Throwdown details contain the rest of the results as well as details about the datasets. Remember that the datasets are all relatively clean by machine learning standards. Also, these experiments do not exercise some advanced features described in my previous post such as predicting multiple fields with Prior Knowledge or handling strings with Google Prediction API.

There are multiple ways to interpret these results. First, remember that I am primarily interested in comparing the cloud-based services; Weka was mainly included to make sure the other results were comparable to popular algorithms. So let’s ignore the Weka results for now, but note that they are usually in line with the other services as expected.

Now let’s get all Olympic on these results.

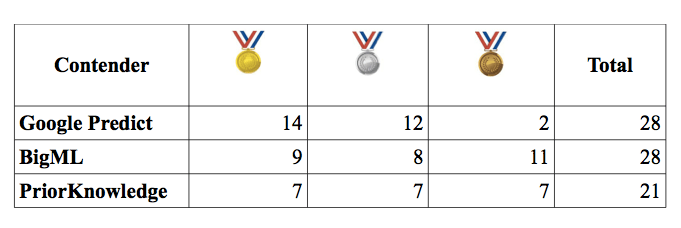

There are 28 tables like the one above. We’ll give Olympic medals to services based on their position in the result tables:

This is a very simple way to look at the data, but it does show that Google Prediction API comes in first more often than the other services. But by how much? Let’s look at the accuracy for the classification problems. The accuracy is simply the number of correct predictions divided by the total number of predictions, so it ranges from 0.0 (no correct predictions) to 1.0 (all correct predictions). Taking the difference between the highest and lowest scores among the cloud-based services for each classification dataset (e.g., 0.97 – 0.93 = 0.04 in the table above) and averaging over the datasets gives a value below 0.04. This shows that these services have very similar predictive performance on these datasets.

On the other hand, the top accuracy scores range from 0.76 on the diabetes dataset to 0.99 on the handwriting recognition dataset, demonstrating that the dataset often dictates predictive performance and the choice of model is less important. The simplest models frequently outperform even the most complex while being faster and cheaper to train.
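For anyone who wants to repeat this kind of tally on the raw results, the medal count and the highest-minus-lowest spread can be sketched in a few lines of Python. The tables, dataset names, service names, and scores below are placeholders rather than the real Throwdown results (only 0.97 and 0.93 echo the example above). The sketch also assumes a metric where higher is better, which holds for accuracy but not for mean squared error, and it ignores ties.

```python
from collections import Counter

MEDALS = ["gold", "silver", "bronze"]

# Placeholder result tables: {dataset: {service: accuracy}}.
tables = {
    "dataset_a": {"service_x": 0.97, "service_y": 0.95, "service_z": 0.93},
    "dataset_b": {"service_x": 0.74, "service_y": 0.76, "service_z": 0.75},
}


def medal_counts(tables):
    """Count gold/silver/bronze finishes per service (higher score wins)."""
    counts = {medal: Counter() for medal in MEDALS}
    for scores in tables.values():
        ranked = sorted(scores, key=scores.get, reverse=True)
        for medal, service in zip(MEDALS, ranked):
            counts[medal][service] += 1
    return counts


def average_spread(tables):
    """Mean of (highest score - lowest score) across the tables."""
    spreads = [max(s.values()) - min(s.values()) for s in tables.values()]
    return sum(spreads) / len(spreads)


print(medal_counts(tables)["gold"])
print(average_spread(tables))
```

For an error metric such as mean squared error, the ranking would need `reverse=False` so that the lowest score takes the gold.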

In summary, Google Prediction API comes out ahead in this analysis but not by much. This isn’t too surprising since Google’s service likely trains multiple black box models and chooses the best performer. BigML and Prior Knowledge, on the other hand, focus on single models that provide more insight into your data. Of course there are many other ways to look at the data, as they do in this JMLR paper, for example. I have included the raw results in CSV format along with the rest of the results in case you want to do your own analysis.

Now let’s look at some general pros and cons related to making predictions with each service.

The Services

BigML

Pros:

- Can make predictions on the website without writing code

- Can store models locally to make predictions offline

- Predictions are very fast when they are done offline

Cons:

- No batch predictions (one prediction per API call)

- Individual predictions through the API are slow

Google Prediction API

One thing to note in the results is that Google Prediction API scored horribly on the two regression datasets that have some missing/unknown values. I believe this is because the service does not handle missing values well. For example, a missing value for a numeric field is assumed to be zero. Better performance could probably be achieved by replacing missing values with something more reasonable (e.g., the average of all the non-missing values for the field).
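The mean-replacement idea suggested above could look something like the following Python sketch, applied to one numeric column before the data is uploaded. The function name and the use of `None` to mark a missing value are assumptions made for illustration. I did not run the experiments this way, so whether it would actually improve the scores is untested.

```python
def impute_mean(values):
    """Replace None entries with the mean of the non-missing values."""
    known = [v for v in values if v is not None]
    if not known:
        raise ValueError("cannot impute a column with no known values")
    mean = sum(known) / len(known)
    return [mean if v is None else v for v in values]


print(impute_mean([1.0, None, 3.0]))  # [1.0, 2.0, 3.0]
```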

Pros:

- Often makes more accurate predictions than the other services

- Individual predictions through the API are very fast

Cons:

- No batch predictions (one prediction per API call)

- Must write code to make predictions (Edit: You can make predictions using their APIs Explorer intended for developers, but you will need to read the API documentation to understand how to use it)

Prior Knowledge

Note that there are no results for Prior Knowledge on COIL or the handwriting recognition datasets. I ran into errors using their API on these datasets and was unable to resolve them in time.

Pros:

- Provides information indicating how confident it is about the predictions it makes

- Supports batch predictions (multiple predictions per API call), but I had to limit them to 100 predictions because I was getting timeout errors with larger batches

Cons:

- Must write code to make predictions
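The 100-prediction batch limit mentioned in the pros above is easy to work around with a small chunking helper. Here is a generic Python sketch. `predict_batch` is a hypothetical stand-in for whatever function sends one batch to a prediction service and returns one prediction per row. It is not a real Prior Knowledge client call.

```python
def batches(rows, size=100):
    """Yield consecutive slices of at most `size` rows."""
    for start in range(0, len(rows), size):
        yield rows[start:start + size]


def predict_all(rows, predict_batch, size=100):
    """Call predict_batch on each chunk and collect the results in order."""
    predictions = []
    for batch in batches(rows, size):
        predictions.extend(predict_batch(batch))
    return predictions


# Stand-in "service" that doubles each input, for demonstration only.
rows = list(range(250))
print([len(b) for b in batches(rows)])  # [100, 100, 50]
print(predict_all(rows, lambda batch: [2 * x for x in batch])[:3])  # [0, 2, 4]
```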

Weka

Pros:

- Predictions are very fast because they are done locally

Cons:

- Must write code to make predictions

Conclusion

That’s it for predictions.

Oh, remember how I mentioned some API errors with Prior Knowledge? It wasn’t the only service that gave me trouble, but that’s a topic for the next post in the series. I will also cover other miscellaneous topics for each service such as cost, support, and documentation.

(Note: As of Dec 5, 2012, Prior Knowledge no longer supports its public API.)


Hi Nick,

You mention that BigML can let you store models locally. How is that done? I’m playing around with a model now and can’t find that option anywhere. Enjoying the series of posts, thanks!

Hey there,

Basically, you will need to use the BigML API to get the model. The easiest way to store/use a model locally is to use one of the libraries that provides convenience methods for this. The “Local Models” section of a recent post (https://blog.bigml.com/2012/08/20/predictive-models-build-once-run-anywhere/) describes this functionality for the Python library.

Not all of the libraries provide convenience functions for local models yet. In that case, you can still get the raw data for the model from the API and interpret it yourself. The model documentation can be found here: https://bigml.com/developers/models

An easy way to look at the raw model from the API is by going here in your browser: https://bigml.io/model/id?username=USERNAME;api_key=API_KEY (obviously replace USERNAME and API_KEY with your info). I’m told there will soon be a link like this from the website when you’re viewing a model.
